Introduction

Extreme content on YouTube dates back to nearly the beginning of the platform. The Southern Poverty Law Center has been highlighting the issue since 2007. The first major advertiser revolt over content concerns on the platform happened a decade later, after an investigation by The Times of London about major brands’ ads running on videos produced by Islamist extremist and neo-Nazi groups.

In the wake of the boycott, YouTube introduced a new set of “brand safety” controls designed to prevent ads from running against hate and other “potentially objectionable content.”

Advertising-keyword blocklists maintained by third-party advertising agencies are controversial. News websites have complained that they unfairly hurt their bottom lines by preventing revenue on articles about LGBTQ issues and the Black Lives Matter movement.

See our data here.

And there has been considerable research and analysis into demonetization, which is when YouTube prohibits specific videos or channels from running ads. For instance, a research project conducted by the people behind the YouTube channels Sealow, Nerd City, and YouTube Analyzed uploaded thousands of videos and found that the company demonetized those with the words “gay,” “lesbian,” and some other words associated with the LGBTQ community. In an article about the research, Vox quoted an unnamed Google spokesperson saying that the company tests to ensure the algorithm it uses for ad blocking isn’t biased and that the company does not “have a list of LGBTQ+ related words that trigger demonetization.”

Our investigation did not focus on demonetization but rather on apparent steps taken by Google to prevent advertisers from deliberately placing ads on YouTube videos the company finds are related to certain words and phrases.

In our first investigation in this series, we found that Google does a poor job of blocking advertisers from targeting hate YouTube videos on the Google Ads portal. It allowed companies to find videos related to more than two-thirds of our list of hate phrases—and we got around the blocks for all but three phrases. (YouTube responded by adding some, but not all, of the words and phrases it missed to its blocklist.)

For this investigation, we sought to learn whether advertisers could use Google Ads to find social justice videos on which to advertise. We became interested in looking at how Google’s advertising portal handled “Black Lives Matter” after noticing, as part of a previous investigation, that the phrase appeared to be blocked in another part of Google’s advertising infrastructure.

“At YouTube, we believe Black lives matter,” YouTube CEO Susan Wojcicki wrote in a message to the YouTube community last June.

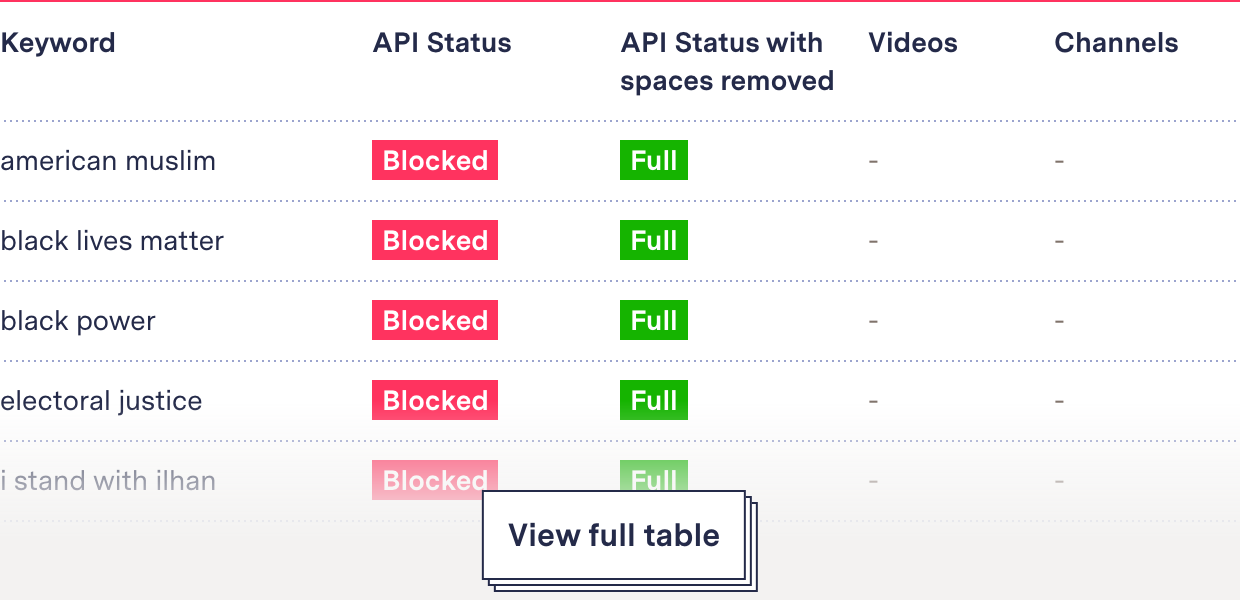

Yet our investigation revealed that YouTube blocked advertisers’ ability to find social justice content, potentially restricting ad revenue for those YouTubers. When we checked in November, Google Ads did not allow companies to find YouTube videos or channels related to more than a dozen terms associated with these movements—including “Black Lives Matter,” “Black power,” and “I stand with Kaepernick.”

More striking, we found this contradicted how the company treated equivalent terms used by the movement’s critics. “All lives matter,” “blue lives matter,” and “White lives matter” could all be used on Google Ads to find YouTube videos and channels on which to advertise.

A video screen capture of the Google Ads portal. It shows two searches for ad placements on YouTube videos. The first returns a blocked response for “Black Lives Matter.” The second returns a full response for “White lives matter.”

Further, all social justice keywords on the list we used that included the word “Muslim” were blocked for ad placement searches, including such innocuous phrases as “Muslim fashion” and “Muslim parenting.” Their counterparts, “Christian fashion” and “Christian parenting,” were not blocked when we checked in November, and neither were the anti-Muslim hate phrases “White sharia” and “civilization jihad.”

When we approached Google, spokesperson Christopher Lawton confirmed that the company blocks advertisers from using some words for ad-finding in the Google Ads platform and did not take issue with our analysis or methods. But he declined to answer a single question in relation to this methodology or the accompanying article.

Google’s response to this analysis was to extend its Google Ads block to remove advertisers’ ability to find videos for advertisements using the terms “White lives matter,” “all lives matter,” “White power,” “White sharia” and “civilization jihad.”

In addition, Google quietly blocked even more racial and social justice terms and phrases from the Google Ads portal. When we checked our list again after reaching out to Google, we found the company had made 32 more terms unavailable for ad searching, including “Black excellence,” “say their names,” and “believe Black women.”

As a result, Google Ads now blocks advertisers from using 83.9 percent of social and racial justice terms on our list to find videos upon which to place ads, up from 32 percent.

These new blocks did not follow the pattern of previous blocks. Instead, Google replicated the API’s native response to words that return no relevant videos, so the API now returns an identical response for the newly blocked terms. In so doing, Google muddied any future efforts to replicate this investigation with other keywords, because it has compromised the API’s marker for “no results.”

Methodology

Data Collection

Google Ads API for Ad Placements

We used the same method of data collection and analysis as in our companion investigation on hate terms. We collected our data for this analysis using an undocumented API for ad placements that we found within the Google Ads portal. We ran social justice keywords through that API to identify which terms were available for ad placement and which were blocked (as opposed to Google finding no related videos).

Details on how we were able to discern that results were blocked (rather than Google not finding relevant videos) appear in the methodology for that story, here.

Sourcing Social Justice Keywords

We asked the advocacy groups Color of Change, MediaJustice, Mijente, and Muslim Advocates to independently provide lists of words and phrases related to racial justice and representation. These organizations have led calls for tech accountability and curbing online hate speech. They sent us a total of 175 discrete keywords.

We winnowed down the list by removing names, general terms, obscure terms, and words that are primarily used outside the context of social justice. We reduced it further by running the list through the Google Ads portal and reviewing the first 20 suggested videos for each term. (Google shows up to 20 suggested videos and channels for each search initially but continues to load others when a user hits the “more” tab.) If we found no social-justice-related YouTube videos in that first tranche of 20, we removed the term from our list. This is the same process we used to limit our list of hate terms in our companion investigation.

The resulting list was vetted one last time by media manipulation researchers at the Technology and Social Change Project at the Shorenstein Center at Harvard University.

Our final list contained 62 keywords representing slogans, social movements, and terms around identity (full list in “Findings” below).

Findings

What’s Blocked?

Most of the terms on our list could initially be used by advertisers to search for videos, as one would expect. But 17—more than one-fourth—were blocked. Among the blocked terms and phrases were “Black Lives Matter,” innocuous commercial topics including “Muslim fashion,” and the slogan supporting congresswoman Ilhan Omar “I stand with Ilhan.” Another 4.8 percent (three terms) returned partially blocked responses (full channel recommendations and a single invalid video recommendation). These include “reparations,” “colonialism,” and “antifascist.”

White Power vs. Black Power

Comparing these results with the results of our companion study on hate terms revealed troubling differences: “Black power” was blocked for ad placement but not “White power.” “Black Lives Matter” was blocked but not “White lives matter,” “all lives matter,” or “blue lives matter.” However, “Black trans lives matter” and “Black girls matter” were also not blocked.

| Blocked response | Full response |
|---|---|
| black power | white power |
| black lives matter | all lives matter, black girls matter, black trans lives matter, blue lives matter, white lives matter |

We also found that all the terms suggested by advocacy groups that mentioned Muslim were blocked, including “no Muslim ban ever,” “Muslim solidarity,” “Muslim parenting,” “Muslim American,” and “Black Muslim.” Google did not block anti-Muslim hate terms like “White sharia” and “civilization jihad.”

The religious descriptions “Jewish,” “Christian,” and “Buddhist” were also blocked as standalone words. But when we combined them with the word “fashion,” only “Muslim fashion,” one of the terms suggested by advocates, was blocked. “Jewish fashion,” “Christian fashion,” and “Buddhist fashion” were not blocked for advertising when we conducted our study, showing the blanket ban on “Muslim” in combination with any other word did not extend to other religions.

Phrases that mention "Muslim" were blocked even in commercially relevant searches

| Blocked response | Full response |
|---|---|
| buddhist | buddhist fashion |
| christian | christian fashion |
| jewish | jewish fashion |
| muslim, muslim fashion | |

When we began to share our findings with experts for reporting—but before we approached Google—this discrepancy was fixed. The company now blocks searches for “fashion” and “parenting” with any of the four religions we checked, though the blocks look different (see details in the “Google Response” section). After we approached Google, we ran other innocuous phrases including religious terms (culture, diet, holidays, history, and conversion) through the API, and all were blocked, though most of the other religious terms returned Google’s new block signal.

Band-Aids

Of the multiword phrases on our list, Google had blocked 16. As we had with hate terms, we found that removing spaces between the words circumvented the block most of the time, in this case for 13 of the 16 phrases.

The three phrases that remained blocked, even without spaces, contained “sex” or “sexual.” The other root words in those three phrases were not blocked on their own. We found that any search containing the letters “sex” together was blocked from ad placement searches, no matter where it appeared in a word or phrase. This shows that YouTube has the capacity to be looser or stricter in its blocks and is exercising that discretion intentionally.
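The space-removal check described above can be sketched in a few lines of Python. This is a minimal illustration, not the code used in the investigation: the function name `collapse_spaces` and the variable names are assumptions made for the example, and the sample phrases are taken from our keyword list.

```python
def collapse_spaces(phrase: str) -> str:
    """Return the phrase with all whitespace removed."""
    return "".join(phrase.split())


# Sample multiword phrases from the keyword list (illustrative subset).
sample_phrases = ["black lives matter", "muslim fashion", "i stand with ilhan"]

# Map each original phrase to the space-free variant to test alongside it.
probes = {phrase: collapse_spaces(phrase) for phrase in sample_phrases}

for original, variant in probes.items():
    print(f"{original!r} -> {variant!r}")
```

Each variant would then be searched in the same way as the original phrase, and the two responses compared to see whether the block still applied.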

The varying blocking levels we found could result from Google using regular expressions (or regex) to construct patterns to automate text matching. Regex can look for exact words or cast a wide net by matching partial words, called substrings.

In sum, our analysis for this investigation and the companion investigation into hate terms uncovered that Google exercises various levels of blocking when searching for YouTube videos for ad placements on Google Ads. It sometimes blocks specific terms (“Black power”). In other cases, it blocks words that appear anywhere in a phrase (“Muslim” in “Muslim fashion”). And in the fewest instances, Google blocks portions of words and phrases (“sex” in “sex work” and “sexwork”).
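To make the three levels concrete, here is a hedged sketch of how regex rules of each kind would behave. We do not know how Google implements its blocklist; the pattern names, the patterns themselves, and the `block_level` function are hypothetical, written only to reproduce the behavior we observed for a handful of terms.

```python
import re
from typing import Optional

# Hypothetical rules for illustration only; Google's real rules are not public.
EXACT_PHRASES = re.compile(r"black power|black lives matter", re.IGNORECASE)
WHOLE_WORDS = re.compile(r"\bmuslim\b", re.IGNORECASE)  # word anywhere in a phrase
SUBSTRINGS = re.compile(r"sex", re.IGNORECASE)  # letters anywhere, even inside a word


def block_level(query: str) -> Optional[str]:
    """Return which kind of rule would block the query, or None if none does."""
    q = query.strip()
    if EXACT_PHRASES.fullmatch(q):
        return "exact phrase"
    if WHOLE_WORDS.search(q):
        return "word in phrase"
    if SUBSTRINGS.search(q):
        return "substring"
    return None


for term in ["Black power", "blackpower", "Muslim fashion",
             "muslimfashion", "sex work", "sexwork"]:
    print(f"{term!r}: {block_level(term)}")
```

Under these assumed rules, “blackpower” and “muslimfashion” slip through because the exact-phrase and whole-word patterns depend on the spaces, while “sexwork” is still caught because a substring pattern does not.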

Google Response

Google spokesperson Christopher Lawton confirmed that YouTube uses blocklists and did not take issue with our analysis or methodology. But he declined to answer a single question related to its social and racial justice blocks.

Rather than remove blocks from social justice words, Google extended its block to rejoinders and hate terms that had been available for ad campaigns—“White lives matter,” “all lives matter,” “White power,” “White sharia” and “civilization jihad.”

And the company also quietly blocked 32 more social and racial justice terms, including “Black excellence,” “say their names,” and “believe Black women.” Lawton did not respond when we asked why the company expanded its block on social and racial justice terms.

| Still allowed | Now blocked |
|---|---|
| abolish the police | abolish ice |
| black dissent | americanmuslim |
| black hair | anti-black |
| digital justice | anti-fascist |
| for the culture | antiracism |
| hijab fashion | believe black women |
| no tech for ice | bipoc |
| oscars so white | black august |
| patriarchy | black excellence |
| tell black stories | black girls matter |
| | black identity extremists |
| | black in tech |
| | black is beautiful |
| | black liberation |
| | black panthers |
| | black trans lives matter |
| | blacklivesmatter |
| | blackpower |
| | civil rights |
| | defund the police |
| | electoraljustice |
| | end police brutality |
| | i can't breathe |
| | istandwithilhan |
| | istandwithkaepernick |
| | lgbtq+ |
| | movement for black lives |
| | muslimamerican |
| | muslimfashion |
| | muslimparenting |
| | muslimsolidarity |
| | no justice no peace |
| | no new jails |
| | nomuslimbanever |
| | qpoc |
| | queer |
| | racial injustice |
| | racial justice |
| | repeal the ban |
| | say her name |
| | say their names |
| | standwithilhan |
| | white fragility |
| | whitesupremacy |
| | wypipo |

And these new blocks do not return the API response we’d previously identified. Instead, Google replicated the API’s native response to words that return no relevant videos and now returns it for the newly blocked terms, compromising the ability to audit the Google Ads blocklist in the future.

Conclusion

Despite YouTube’s promises to support Black creators, its parent company bans important racial justice terms—including Black Lives Matter, Black power, and Black Muslim—from searches for ad placements but allowed “White lives matter,” “White power,” “White nationalists,” and other retorts and hate phrases.

A third of the 62 racial and social justice words and phrases on our list were blocked in searches for videos for ad placements when we checked in November. After we reached out to the company, it blocked even more phrases. Google now blocks 83.9 percent of the phrases on our list of social and racial justice terms for use by advertisers to locate videos for their ads.
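The percentages above can be checked with simple arithmetic. The sketch below assumes that the three partially blocked terms are counted together with the 17 fully blocked ones, which is the reading under which the reported figures reconcile; the variable and function names are ours, chosen for the example.

```python
TOTAL_TERMS = 62          # size of the final keyword list
fully_blocked = 17        # blocked when we checked in November
partially_blocked = 3     # partially blocked responses in November
newly_blocked = 32        # additional terms blocked after we contacted Google


def percent(count: int, total: int) -> float:
    """Share of the list, as a percentage rounded to one decimal place."""
    return round(100 * count / total, 1)


# Assumption: partially blocked terms count toward the blocked total.
before = percent(fully_blocked + partially_blocked, TOTAL_TERMS)
after = percent(fully_blocked + partially_blocked + newly_blocked, TOTAL_TERMS)

print(f"Blocked in November: {before} percent")
print(f"Blocked after Google's changes: {after} percent")
```

On that assumption, 20 of 62 terms works out to roughly a third of the list, and 52 of 62 to 83.9 percent.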

The religious term “Muslim” resulted in blocks by association, including blocking keywords with commercial appeal like “Muslim parenting” and “Muslim fashion.” This was different from how Google Ads treated other religious keywords. The treatment of Muslim social justice terms was also different from how Google treated anti-Muslim hate terms, including “White sharia” and “civilization jihad,” which were available to search for videos for ad placements when we conducted our investigation.

After we approached Google, the company also blocked all the hate phrases that we pointed out were in conflict with the social and racial justice ad blocks. Google began blocking innocuous phrases with other religious words during our reporting, after we began conducting interviews in which we revealed our findings to third parties.

Acknowledgements

We thank Jeffrey Knockel of Citizen Lab, Robyn Caplan of Data & Society, and Manoel Ribeiro of the Swiss Federal Institute of Technology Lausanne for comments on an earlier draft of this methodology. We would also like to thank members of Color of Change, MediaJustice, Mijente, and Muslim Advocates for submitting keywords to create the lists behind our reporting, and Brandi Collins-Dexter of the Technology and Social Change Project at the Shorenstein Center at Harvard University for reviewing the keyword list.