This article was copublished with WIRED.

Crime predictions generated for the police department in Plainfield, New Jersey, rarely lined up with reported crimes, an analysis by The Markup has found, adding new context to the debate over the efficacy of crime prediction software.

Geolitica, known as PredPol until a 2021 rebrand, produces software that ingests data from crime incident reports and produces daily predictions on where and when crimes are most likely to occur.

We examined 23,631 predictions generated by Geolitica between Feb. 25 and Dec. 18, 2018, for the Plainfield Police Department (PD). Each prediction we analyzed from the company’s algorithm indicated that one type of crime was likely to occur in a location not patrolled by Plainfield PD. In the end, the success rate was less than half a percent. Fewer than 100 of the predictions lined up with a crime in the predicted category that was also later reported to police.

Diving deeper, we looked at predictions specifically for robberies or aggravated assaults that were likely to occur in Plainfield and found a similarly low success rate: 0.6 percent. The pattern was even worse when we looked at burglary predictions, which had a success rate of 0.1 percent.

“Why did we get PredPol? I guess we wanted to be more effective when it came to reducing crime. And having a prediction where we should be would help us to do that. I don’t know that it did that,” said Captain David Guarino of the Plainfield PD. “I don’t believe we really used it that often, if at all. That’s why we ended up getting rid of it.”

Guarino noted that concerns about accuracy, along with the department’s general lack of interest in using the software, suggested that the money paid for Geolitica’s software could have been better spent elsewhere.

“We do have a mentoring program,” Guarino said. “We could probably put [the money] toward that, for the summertime. We used to have about 80 kids.” A contract between the department and Geolitica listed a $20,500 subscription fee for its first annual term, and then $15,500 for a yearlong extension.

We sent our findings and methodology to Geolitica prior to publication. Representatives did not respond to multiple requests for comment.

A report from Wired last week revealed that Geolitica is ceasing operations at the end of this year. SoundThinking, a law enforcement technology company previously known as ShotSpotter, has hired Geolitica’s engineering team, but not its senior leadership, and is in the process of acquiring some of its intellectual property. Geolitica’s current customers are being transitioned into SoundThinking’s patrol platform.

“We believe that offering our law enforcement partners the ability to deploy their limited staffing resources has tremendous merit and that when fully integrated into Resource Router, these highly complementary assets will create a best-in-class deployment system for agencies of all sizes,” SoundThinking Senior Vice President Sam Klepper wrote in a statement to The Markup.

Information about the shutdown and customer transition to SoundThinking was not posted on Geolitica’s website or social media accounts. SoundThinking also purchased HunchLab, another predictive policing company, from Azavea in 2018.

Klepper explained that SoundThinking plans on incorporating some of Geolitica’s algorithms into its own existing systems, but “the algorithms mentioned do not include any crime prediction modeling source code at all. SoundThinking did not purchase any of Geolitica’s crime prediction technology or source code.”

Plainfield Officials Said They Never Used the System to Direct Patrols

In 2021, The Markup published an investigation in partnership with Gizmodo showing that Geolitica’s software tended to disproportionately target low-income, Black, and Latino neighborhoods in 38 cities across the country. Our investigation was based on data we downloaded in January 2021 from an unprotected cloud storage server linked from a page on the Los Angeles Police Department’s website. That server stored predictions Geolitica had provided to police departments in dozens of cities across the country over nearly three years. Access to that server was later restricted.

Our investigation stopped short of analyzing precisely how effective Geolitica’s software was at predicting crimes because only 2 out of 38 police departments provided data on when officers patrolled the predicted areas. Geolitica claims that sending officers to a prediction location would dissuade crimes through police presence alone. It would be impossible to accurately determine how effective the program is without knowing which predictions officers responded to and which ones they did not respond to.

After we requested this information from the 38 departments in our original investigation, only Plainfield provided us with more than one day’s worth of data on officer patrol locations.

We tested Geolitica’s accuracy by comparing the system’s predictions to crime reports, which only capture crimes reported to police, not instances when someone was victimized but elected not to inform law enforcement. The Bureau of Justice Statistics reported that less than half of violent and property crimes were reported to police in 2022. Reporting rates are also inconsistent across demographic groups. The agency found in a 2012 report that Black, Latino, and lower-income crime victims were more likely to report the crime than White people and people from higher-income households.

Plainfield police officers reported visiting 129 of the 23,760 prediction locations provided by Geolitica, according to the data. To conduct our analysis, we filtered out those predictions to sidestep the issue of police presence deterring potential crimes, and compared the rest against a list of crime incident reports obtained through public records requests. Each prediction was assigned to one of the department’s four daily patrol shifts, and each shift lasted 11 hours and 15 minutes. If a crime occurred in that location during the shift period, we counted it as a correct prediction.
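The matching rule described above can be illustrated with a minimal sketch. This is not The Markup’s actual analysis code: the record layouts, field names, and sample rows are hypothetical, and it assumes each crime report has already been mapped to a prediction location. Only the 11-hour-15-minute shift length, the category match, and the option to drop patrolled predictions come from the description in this article.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Shift length as stated in the article; all names below are hypothetical.
SHIFT_LENGTH = timedelta(hours=11, minutes=15)


@dataclass(frozen=True)
class Prediction:
    box_id: str            # hypothetical ID for the predicted location
    crime_type: str        # category of crime the prediction named
    shift_start: datetime  # start of the patrol shift the prediction covers
    patrolled: bool        # True if officers were logged visiting the location


@dataclass(frozen=True)
class CrimeReport:
    box_id: str            # assumes the report was already mapped to a location
    crime_type: str
    occurred_at: datetime


def is_hit(pred, reports):
    """A prediction counts as correct if a crime of the predicted category
    was reported in the same location during the prediction's shift."""
    shift_end = pred.shift_start + SHIFT_LENGTH
    return any(
        r.box_id == pred.box_id
        and r.crime_type == pred.crime_type
        and pred.shift_start <= r.occurred_at < shift_end
        for r in reports
    )


def success_rate(predictions, reports, drop_patrolled=True):
    """Share of predictions that lined up with a reported crime.
    Patrolled predictions are dropped by default to sidestep deterrence."""
    kept = [p for p in predictions if not (drop_patrolled and p.patrolled)]
    if not kept:
        return 0.0
    hits = sum(is_hit(p, reports) for p in kept)
    return hits / len(kept)


# Made-up sample rows, for illustration only.
predictions = [
    Prediction("A", "burglary", datetime(2018, 3, 1, 6, 0), False),
    Prediction("B", "robbery", datetime(2018, 3, 1, 6, 0), False),
    Prediction("C", "burglary", datetime(2018, 3, 1, 6, 0), True),
    Prediction("A", "burglary", datetime(2018, 3, 2, 6, 0), False),
]
reports = [
    CrimeReport("A", "burglary", datetime(2018, 3, 1, 14, 30)),  # matches the first prediction
    CrimeReport("B", "burglary", datetime(2018, 3, 1, 9, 0)),    # wrong category for location B
    CrimeReport("C", "burglary", datetime(2018, 3, 1, 7, 0)),    # location C was patrolled
]

rate_unpatrolled = success_rate(predictions, reports)
rate_all = success_rate(predictions, reports, drop_patrolled=False)
```

Calling `success_rate` with `drop_patrolled=False` mirrors the second run of the analysis, in which patrolled locations were kept in rather than filtered out.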

The length of these prediction windows was a major problem, American University law professor Andrew Ferguson, who authored the book The Rise of Big Data Policing, told The Markup after being informed of our findings. “The timing piece is a flawed system,” he said. “The precision of it was supposed to be having the officers at the right time at the right place, not ‘during an entire day’s shift, there will be crime.’”

Plainfield officials said they never used the system to direct patrols. Instead, officials said all of the instances when the automated monitoring system tagged police vehicles as having visited a prediction location were coincidental overlaps that naturally occurred as officers regularly traveled across the geographically small, largely suburban community. To account for all possibilities, The Markup ran its analysis with the patrolled prediction locations both included and filtered out, and did not see a significant difference in the results.

Records showed that none of the five arrests that occurred in prediction locations during the time period of our analysis could have conceivably been due to officers responding to a Geolitica prediction, which Guarino also confirmed. Similarly, we asked the other 37 departments in our previous investigation if they could point to any arrests that came as a direct result of a Geolitica prediction, but none could. Representatives from the National Association of Criminal Defense Lawyers and the National District Attorneys Association could not recall a case going to trial where the arrest came as a direct result of an algorithmic crime prediction.

Despite Plainfield officials’ assertions that the software wasn’t used to inform where officers went, there is a quote on Geolitica’s website from former Plainfield police officer Sgt. Larry Brown hailing the program. “Robbery at a restaurant…PredPol prediction and robbery was 10 ft. away,” wrote Brown. “I train our 100+ officers on how to patrol PredPol boxes and I love our successes.”

In an email to The Markup, Guarino wrote that he wasn’t familiar with the incident Brown, who retired in 2018, mentioned and didn’t “know how Sgt. Brown came to that conclusion.” Multiple attempts to contact Brown were unsuccessful.

Massive Number of Predictions, Relatively Small Number of Crimes

A major problem with Geolitica’s system, as it was used in Plainfield, is that there were a massive number of predictions compared to a relatively small number of crimes. Plainfield, which has a population of around 54,000, averaged around 80 predictions per day for just a handful of crime types, while the maximum number of crimes of any type reported in a single day was 22 during the analysis period. This volume of predictions sits below the median of 107 daily predictions for the 37 other jurisdictions we analyzed in our original investigation. Some larger cities had far more predictions, like Los Angeles, which averaged just over a thousand daily. But there were cities smaller than Plainfield, like Niles, Ill., which has around half Plainfield’s population, with a large volume of daily predictions—231, on average.

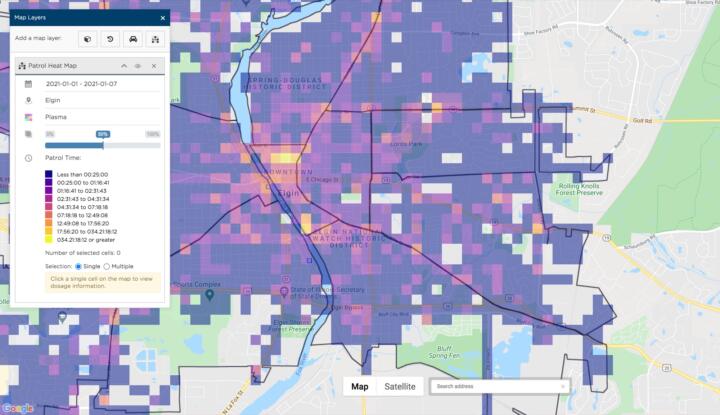

In her 2019 master’s thesis for the Naval Postgraduate School, Ana Lalley, police chief of Elgin, Ill., wrote critically about her department’s experience with the software, which left officers unimpressed. “Officers routinely question the prediction method,” she wrote. “Many believe that the awareness of crime trends and patterns they have gained through training and experience help them make predictions on their own that are similar to the software’s predictions.”

Lalley added that when the department brought those concerns to Geolitica, the company warned that the software “may not be particularly effective in communities that have little crime.” Elgin, a Chicago suburb, has about double Plainfield’s population.

“I think that what this shows is just how unreliable so many of the tools sold to police departments are,” said Dillon Reisman, founder of the American Civil Liberties Union of New Jersey’s Automated Injustice Project. “We see that all over New Jersey. There are lots of companies that sell unproven, untested tools that promise to solve all of law enforcement’s needs, and, in the end, all they do is worsen the inequalities of policing and for no benefit to public safety.”

David Weisburd, a criminologist who served as a reviewer on a 2011 academic paper coauthored by two of Geolitica’s founders, recalled approving their ideas around crime modeling at the time, but warned that inaccurate predictions can have their own negative externalities beyond wasting officers’ time.

“Predicting crimes in places where they don’t occur is a destructive issue,” Weisburd said. “The police are a service, but they are a service with potential negative consequences. If you send the police somewhere, bad things could happen there.”

One study found that adolescent Black and Latino boys stopped by police subsequently experienced heightened levels of emotional distress, leading to increased delinquent behavior in the future. Another study found higher rates of use of force in New York City neighborhoods led to a decline in the number of calls to the city’s 311 tip line, which can be used for everything from repairing potholes to getting help understanding a property tax bill.

“To me, the entire benefit of this type of analysis is using it as a starting point to engage police commanders and, when possible, community members in larger dialogue to help understand exactly what it is about these causal factors that are leading to hot spots forming,” said Northeastern University professor Eric Piza, who has been a critic of predictive policing technology.

For example, the city of Newark, New Jersey, used risk terrain modeling (RTM) to identify locations with the highest likelihood of aggravated assaults. Developed by Rutgers University researchers, RTM matches crime data with information about land use to identify trends that could be triggering crimes. For example, the analysis in Newark showed that many aggravated assaults were occurring in vacant lots.

The RTM then points to potential environmental solutions that come from across local governments, not just police departments. A local housing organization used that New Jersey data to prioritize lots to develop for new affordable housing that could not only increase housing stock but also reduce crime. Other community groups used the crime-risk information to convert city-owned lots to well-lighted, higher-trafficked green spaces less likely to attract crime.

Even Geolitica seems to be moving away from its original core functionality of crime predictions and toward pitching itself as a broader platform for managing police department data. Both Geolitica’s patrol management platform and the one operated by SoundThinking advertise that they can incorporate elements of risk terrain modeling into their systems.

A pitch presentation, prepared for the police department in San Ramon, Calif., last year and obtained through a public records request, offered three tiers of service the department could purchase, with only the highest-cost option providing “automated daily patrol guidance.” The lower levels offered services designed to help departments better understand their operations, like creating heat maps showing the frequency of officer patrols in each part of a jurisdiction and allowing the department to independently set their own locations for officers to patrol. San Ramon Police Department Records Manager Jessica Simonds told The Markup that the department subscribed to the lowest level, without the automated predictions.

“The problem with predictive policing is the policing part,” said Ferguson. “The data about where crime occurs and where victimization occurs, that’s something that should be studied. But that doesn’t mean that the solution is policing. It might mean the solution is figuring out why people are victimized and funding the solution to that.”