Hello World is a weekly newsletter, delivered every Saturday morning, that goes deep into our original reporting and the questions we put to big thinkers in the field.

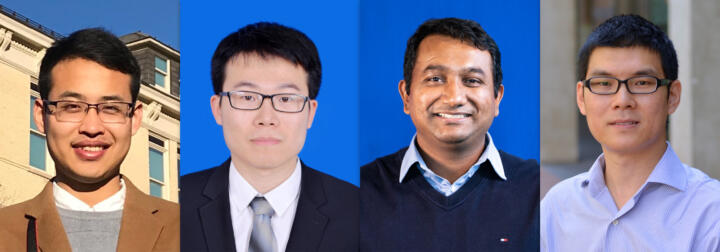

Hot takes on artificial intelligence are everywhere: Depending on where you look, generative AI will either kill us, take our jobs, liberate us from drudgery, or spur tremendous innovation. (Either way, calls for regulation are here.) At The Markup we like to take a measured approach that often, well, includes measuring actual consequences. So I’m thrilled to share this Q&A with associate professor of computer science at University of California, Riverside, Shaolei Ren, who together with his team—Riverside Ph.D. candidates Pengfei Li and Jianyi Yang, and Mohammad A. Islam, an associate professor of computer science at University of Texas at Arlington—recently published a paper quantifying the secret water footprint of AI. While the carbon footprint of emerging technologies has gotten some attention, to truly build toward sustainability, water has to be part of the equation too.

Also, hello! I’m Nabiha Syed, the CEO of The Markup, and I happen to be a person who believes technological advancement can coexist with a healthy planet—if we make that a priority. Keep reading to learn how much water a single ChatGPT conversation might “drink” and tangible steps we can take to reduce the water footprint of AI.

(This Q&A has been edited for brevity and clarity.)

Syed: With very good reason, we’re starting to see more scrutiny of the carbon footprint of various technologies, including AI models like GPT‑3 and GPT‑4 as well as bitcoin mining. But your research focuses on something receiving less attention: the secret water footprint of AI technology. Tell us about your findings.

Ren: The water footprint has stayed under the radar for various reasons, including the big misperception that freshwater is an “infinite” resource and the relatively low price of water. Many AI model developers are not even aware of their water footprint. But this doesn’t mean the water footprint is unimportant, especially in drought regions like California.

Together with my students and my collaborator at UT Arlington, I did some research on AI’s water footprint using state-of-the-art estimation methodology. We find that large-scale AI models are indeed big water consumers. For example, training GPT‑3 in Microsoft’s state-of-the-art U.S. data centers can directly consume 700,000 liters of clean freshwater (enough to produce 370 BMW cars or 320 Tesla electric vehicles), and the water consumption would have tripled if training had been done in Microsoft’s data centers in Asia. These numbers do not include the off-site water footprint associated with electricity generation.

For inference (i.e., conversation with ChatGPT), our estimate shows that ChatGPT needs a 500-ml bottle of water for a short conversation of roughly 20 to 50 questions and answers, depending on when and where the model is deployed. Given ChatGPT’s huge user base, the total water footprint for inference can be enormous.

We then studied the spatial-temporal diversity of AI models’ runtime water efficiency: Water efficiency changes over time and across locations. This implies that there’s potential to reduce AI’s water footprint by dynamically scheduling AI workloads and tasks at certain times and in certain locations, the way we reduce our electricity bills by charging our electric vehicles at night, when electricity prices are low.
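To put the figures quoted above in per-exchange terms, here is a minimal arithmetic sketch. It uses only the numbers from the interview (a 500-ml bottle per 20 to 50 questions and answers, 700,000 liters for training, and a roughly threefold increase in Asia). The variable and function names are illustrative, not taken from the paper.

```python
# Figures quoted in the interview; names are illustrative.
BOTTLE_ML = 500                 # water per short conversation
EXCHANGES_LOW = 20              # fewest Q&A exchanges per bottle
EXCHANGES_HIGH = 50             # most Q&A exchanges per bottle
TRAINING_LITERS_US = 700_000    # on-site water for training in U.S. data centers
ASIA_MULTIPLIER = 3             # "would have tripled" in Asian data centers


def ml_per_exchange(bottle_ml: float, exchanges: int) -> float:
    """Average water per question-and-answer exchange, in milliliters."""
    return bottle_ml / exchanges


# Fewer exchanges per bottle means more water per exchange.
high_ml = ml_per_exchange(BOTTLE_ML, EXCHANGES_LOW)
low_ml = ml_per_exchange(BOTTLE_ML, EXCHANGES_HIGH)
training_liters_asia = TRAINING_LITERS_US * ASIA_MULTIPLIER

print(f"Per exchange: {low_ml:.0f} to {high_ml:.0f} ml")
print(f"Training in Asia: about {training_liters_asia:,} liters")
```

On these numbers, a single exchange works out to roughly 10 to 25 ml of water, and the Asia training scenario to about 2.1 million liters.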

Syed: How does this water footprint compare to something like, say, beef?

Ren: We might see from some websites that beef and jeans have a big water footprint, but their water footprint is for the entire life cycle and includes a large portion of nonpotable water. For example, the water footprint of jeans starts from cotton growth. In our study, we only consider the operational water footprint (i.e., water consumption associated with training and running the AI models), whereas the embodied water footprint (e.g., water footprint associated with AI server manufacturing and transportation, including chipmaking) is excluded. If we factor in the embodied water footprint for AI models, my gut feeling is that the overall water footprint would be easily increased by 10 times or even more.

Syed: I’m particularly curious about how carbon reduction and water conservation might be in tension with one another. What do you mean when you ask whether we should “follow or unfollow the sun”?

Ren: Water efficiency mostly depends on the outside temperature as well as energy fuel mixes for electricity generation. Carbon-efficient hours/locations do not mean water-efficient hours/locations, and sometimes they’re even opposite to each other.

For example, in California, solar energy production is high around noon, which makes those the most carbon-efficient hours. But the outside temperature is also high around noon, so water efficiency is at its worst. As a result, if we only consider carbon footprint reduction (say, by scheduling more AI training around noon), we’ll likely end up with higher water consumption, which is not truly sustainable for AI.

On the other hand, if we only reduce the water footprint (say, by scheduling AI training at midnight), we may increase the carbon footprint because less solar energy is available.
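The tension described above can be sketched as a small scheduling toy. Everything here is an assumption made for illustration: the hourly carbon and water numbers are invented (only their shape, with low carbon but high water use at noon, follows the interview), and the weighted-sum scoring is one simple way to trade the two off, not the method used in the paper.

```python
# Hypothetical hourly values; only the overall pattern follows the interview.
CARBON_G_PER_KWH = {0: 400, 6: 350, 12: 150, 18: 380}   # grid carbon intensity
WATER_L_PER_KWH = {0: 1.0, 6: 1.2, 12: 3.0, 18: 2.0}    # water per unit of energy


def normalise(values: dict[int, float]) -> dict[int, float]:
    """Rescale values to the 0..1 range so carbon and water are comparable."""
    lo, hi = min(values.values()), max(values.values())
    return {hour: (v - lo) / (hi - lo) for hour, v in values.items()}


def best_hour(carbon: dict[int, float], water: dict[int, float],
              water_weight: float) -> int:
    """Pick the hour with the lowest weighted score.

    water_weight=0 considers carbon only; water_weight=1 considers water only.
    """
    c, w = normalise(carbon), normalise(water)
    return min(carbon, key=lambda h: (1 - water_weight) * c[h] + water_weight * w[h])


carbon_only = best_hour(CARBON_G_PER_KWH, WATER_L_PER_KWH, 0.0)
water_only = best_hour(CARBON_G_PER_KWH, WATER_L_PER_KWH, 1.0)
balanced = best_hour(CARBON_G_PER_KWH, WATER_L_PER_KWH, 0.5)
print(carbon_only, water_only, balanced)
```

With these made-up inputs, a carbon-only scheduler picks noon, a water-only scheduler picks midnight, and an equal weighting lands on the early morning, which is the kind of compromise "follow or unfollow the sun" points at.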

Syed: It’s clear that tech giants like Microsoft, Google, and Amazon are betting big on the future of AI, but are we seeing them make environmental considerations a priority in their development?

Ren: Yes, we’ve been seeing water footprint emerge as a priority in the sustainability reports of several tech giants such as Google, Microsoft, and Meta.

Also, legislators have recently begun to consider the impact of data centers’ water usage on the local environment. For example, in Virginia, where Loudoun County is known as the “data center capital of the world,” SB 1078, proposed early this year, would require “a site assessment … to examine the effect of the data center on water usage and carbon emissions as well as any impacts on agricultural resources.”

Most of the industry’s efforts so far have focused on improving on-site water efficiency from the “engineering” perspective, e.g., improving the data center’s cooling tower efficiency and processing recycled water instead of tapping into local potable water resources. Nonetheless, the vast majority of data centers still use potable water and cooling towers. For example, even tech giants such as Google rely heavily on cooling towers and consume billions of liters of potable water each year. Such huge water consumption has put stress on local water infrastructure; Google’s data center used more than a quarter of all the water in The Dalles, Ore. Moreover, many data centers are located in drought-prone areas such as California.

Our study shows that “when” and “where” to train a large AI model can significantly affect the water footprint. The underlying reason is the spatial-temporal diversity of both on-site and off-site water usage effectiveness (WUE): On-site WUE changes due to variations in outside weather conditions, and off-site WUE changes due to variations in the grid’s energy fuel mixes to meet time-varying demands. In fact, WUE varies on a much faster timescale than monthly or seasonally. Therefore, by exploiting the spatial-temporal diversity of WUE, we can dynamically schedule AI model training and inference to cut the water footprint.

For example, if we train a small AI model, we can schedule the training task at midnight and/or in a data center location with better water efficiency. Likewise, some water-conscious users may prefer to use the inference services of AI models during water-efficient hours and/or in water-efficient data centers, which can contribute to the reduction of AI models’ water footprint for inference.

Such demand-side water management complements the existing engineering-based on-site water-saving approaches that focus on the supply side. Also, our approach is software-based and hence can be used together with any cooling systems for free without particular requirements on the climate conditions or new cooling system installations.
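The "when and where" idea above can be illustrated with a short sketch. It assumes a simplified model in which water use is energy multiplied by the sum of on-site and off-site WUE, both in liters per kWh, ignoring any facility overhead. The site names, hours, and WUE values are hypothetical placeholders, and the `Slot`, `water_liters`, and `pick_slot` names are made up for this example rather than taken from the study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Slot:
    """A candidate place and time to run a job (all values hypothetical)."""
    site: str
    hour: int
    onsite_wue: float    # liters per kWh, driven by outside weather
    offsite_wue: float   # liters per kWh, driven by the grid's fuel mix


def water_liters(energy_kwh: float, slot: Slot) -> float:
    """Simplified estimate: energy times combined on-site and off-site WUE."""
    return energy_kwh * (slot.onsite_wue + slot.offsite_wue)


def pick_slot(energy_kwh: float, slots: list[Slot]) -> Slot:
    """Choose the slot with the smallest estimated water use."""
    return min(slots, key=lambda s: water_liters(energy_kwh, s))


SLOTS = [
    Slot("site_a", 0, 0.5, 2.5),
    Slot("site_a", 12, 2.0, 1.5),
    Slot("site_b", 0, 0.25, 1.75),
    Slot("site_b", 12, 1.0, 2.0),
]

JOB_ENERGY_KWH = 1000.0  # hypothetical training job
best = pick_slot(JOB_ENERGY_KWH, SLOTS)
print(best.site, best.hour, water_liters(JOB_ENERGY_KWH, best))
```

Because the choice is made purely in software, a sketch like this works with whatever cooling system a site already has. A real scheduler would need live WUE data, which is where the transparency discussed next comes in.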

Syed: You propose transparency as a helpful next step. What questions could greater transparency help us answer?

Ren: By having more transparency, we’d be able to know exactly when and where we have the most water-efficient AI models.

Transparency also makes it possible to measure, benchmark, and improve the AI models’ water footprint, which can be of great value to the research community. Currently, some AI conferences have requested that authors declare their AI models’ carbon footprint in their papers; we believe that with transparency and awareness, authors can also declare their AI models’ water footprint as part of the environmental impact.

With such information, AI model developers can better schedule their AI model training and also exploit the spatial-temporal diversity to better route the users’ inference requests to save water with little to zero degradation in other performance metrics.

Additionally, transparency can let users see their water footprint at runtime and reduce it (say, they might want to defer some nonurgent inference requests to water-efficient hours if possible). Apple has integrated clean energy scheduling into its iPhone products by selecting low-carbon hours for charging, and we hope that water-aware AI training and inference can also be turned into reality in the future.

I hope you found this as thought-provoking as I did! This one hit especially close to home: My dad and sister are both water resources engineers, and I grew up in drought-riddled Southern California. We’re in a technological boom, for sure—but innovation should serve our public good, not obliterate it.

Thanks for reading!

Always,

Nabiha Syed

Chief Executive Officer

The Markup