Update, February 6, 2026

Read about our latest updates to Blacklight.

Blacklight is a real-time website privacy inspector.

The tool emulates how a user might be surveilled while browsing the web. Users type a URL into Blacklight, and it visits the requested website, scans for known types of privacy violations, and returns an instant privacy analysis of the inspected site.

Blacklight works by visiting each website with a headless browser, running custom software built by The Markup. This software monitors which scripts on that website are potentially surveilling the user by performing nine different tests, each investigating a specific, known method of surveillance.

The types of surveillance that Blacklight seeks to identify are:

- Third-party cookies

- Ad trackers

- Key logging

- Session recording

- Canvas fingerprinting

- Facebook tracking

- Google Analytics “Remarketing Audiences”

- X (formerly Twitter) tracking pixel

- TikTok tracking pixel

These are defined later in this document, as are their limitations.

Blacklight was built using the Node.js JavaScript runtime and the Puppeteer Node library, which provides high-level control over a Chromium (open-source Chrome) browser. When a user enters a URL into Blacklight, the tool opens a headless web browser with a fresh profile and visits the website's homepage as well as an additional randomly selected page deeper inside the same website.

While the browser is visiting the website, it runs custom software in the background that monitors scripts and network requests to observe when and how user data is being collected. To monitor scripts, Blacklight modifies various fingerprintable properties of the browser’s Window API. This allows Blacklight to log which script made a particular function call, using the Stacktrace-js package. The network requests are collected using a monitoring tool included in Puppeteer’s API.

Blacklight uses the script data and network requests to run the nine tests described above. Afterward, it closes the browser and generates an instant report for the user.

Blacklight records a list of all the URLs that the inspected website requests. It also compiles a list of all domains and subdomains that were requested. The tool we provide to the public does not save those lists unless the user chooses to share results with us through an option in the tool.

We define domains using the public suffix + 1 method, which reduces a hostname to its public suffix (such as .com or .co.uk) plus one more label; for example, anon-stats.eff.org reduces to eff.org. We define a first-party domain as any domain that matches the website visited, including its subdomains, and a third-party domain as any domain that does not. The tool compares the list of third-party domains from the website requests with DuckDuckGo’s Tracker Radar dataset.
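As a rough illustration of the public suffix + 1 rule and the first-party/third-party split, here is a minimal Python sketch. It is not Blacklight's JavaScript code: the five-entry PUBLIC_SUFFIXES set is a hypothetical stand-in for the full Public Suffix List, and the function names are made up for this example.

```python
from urllib.parse import urlsplit

# Hypothetical stand-in for the Public Suffix List (publicsuffix.org).
# A real implementation should load the full, current list.
PUBLIC_SUFFIXES = {"com", "org", "net", "co.uk", "org.uk"}


def registrable_domain(host: str) -> str:
    """Return the public suffix plus one label (eTLD+1) for a hostname."""
    labels = host.lower().rstrip(".").split(".")
    # i = 0 tests the whole hostname, so the longest matching suffix wins.
    for i in range(len(labels)):
        if ".".join(labels[i:]) in PUBLIC_SUFFIXES:
            # Keep one extra label to the left of the suffix, when there is one.
            return ".".join(labels[max(i - 1, 0):])
    return ".".join(labels)  # unknown suffix: fall back to the full hostname


def is_third_party(request_url: str, site_url: str) -> bool:
    """A request is third party when its eTLD+1 differs from the visited site's."""
    request_host = urlsplit(request_url).hostname or ""
    site_host = urlsplit(site_url).hostname or ""
    return registrable_domain(request_host) != registrable_domain(site_host)
```

With this sketch, a request to anon-stats.eff.org made while visiting eff.org counts as first party, while a request to google-analytics.com made while visiting example.com counts as third party.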

This data merge allows Blacklight to add the following information about the third-party domains found on the inspected site:

- Name of the domain’s owner.

- Categories assigned by DuckDuckGo to each domain that attempt to describe its observed purpose or intent.

This additional information about third parties is provided to users as context for Blacklight’s instant test results. Among other things, this information is used to count the number of advertising-related trackers present on a given website.

Blacklight runs tests based on the root URL of the page entered by a user into the tool. For example, if a user types in https://example.com/sports, Blacklight starts its inspection at https://example.com and disregards the /sports path. If a user types in https://sports.example.com, Blacklight starts its inspection at https://sports.example.com.
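The root-URL rule above amounts to keeping only the scheme and host of the entered address. A minimal Python sketch, assuming (our assumption, not documented behavior) that input without a scheme is treated as https; the function name is hypothetical:

```python
from urllib.parse import urlsplit


def inspection_start_url(user_input: str) -> str:
    """Keep only the scheme and host (plus any port) of a user-entered URL."""
    # Assumption for this sketch: input without a scheme is treated as https.
    if "://" not in user_input:
        user_input = "https://" + user_input
    parts = urlsplit(user_input)
    # Path, query string and fragment are discarded; subdomains are kept.
    return f"{parts.scheme}://{parts.netloc.lower()}"
```

Because the subdomain survives, https://sports.example.com and https://example.com are inspected separately, matching the two examples in the text.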

Blacklight results for each requested domain are cached for 24 hours, and these cached reports are delivered in response to subsequent user requests for the same website during those 24 hours. This is designed to prevent the tool from being used maliciously to overwhelm a website with thousands of automated visits.
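The 24-hour cache can be sketched as a per-domain map from domain to (timestamp, report). This in-memory Python version is a hypothetical illustration; it does not reflect how or where Blacklight actually stores cached reports. The injectable clock parameter exists only to make the expiry easy to test.

```python
import time
from typing import Callable, Dict, Optional, Tuple

CACHE_TTL_SECONDS = 24 * 60 * 60  # reports are reused for 24 hours


class ReportCache:
    """Hypothetical in-memory cache of inspection reports, keyed by domain."""

    def __init__(self, clock: Callable[[], float] = time.time) -> None:
        self._clock = clock
        self._entries: Dict[str, Tuple[float, dict]] = {}

    def get(self, domain: str) -> Optional[dict]:
        entry = self._entries.get(domain)
        if entry is None:
            return None
        stored_at, report = entry
        if self._clock() - stored_at >= CACHE_TTL_SECONDS:
            del self._entries[domain]  # expired: the next request re-inspects
            return None
        return report

    def put(self, domain: str, report: dict) -> None:
        self._entries[domain] = (self._clock(), report)


def inspect_with_cache(cache: ReportCache, domain: str,
                       run_inspection: Callable[[str], dict]) -> dict:
    """Serve a cached report when one is fresh; otherwise inspect and store."""
    report = cache.get(domain)
    if report is None:
        report = run_inspection(domain)
        cache.put(domain, report)
    return report
```

However many requests arrive for the same domain within the window, the site itself receives at most one automated visit per 24 hours.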

Blacklight will also tell users whether their results are high, low, or about average compared with what the tool found on the 100,000 most popular websites as ranked by the Tranco List. This is described in more detail below.

The Blacklight code base is open source and available on GitHub; it can also be downloaded as an NPM module.

There are limitations to our analysis. Blacklight emulates a user visiting a website, but its automated behavior is different from human behavior, and that behavior may trigger different types of surveillance. For instance, an automated request might trigger more fraud detection but fewer ads.

Given the dynamic nature of web-based technology, it is also possible that some of these tests will become out-of-date over time. And new legitimate use cases for the techniques Blacklight flags could emerge that are not listed in the tool’s caveats.

For this reason, Blacklight results should not be taken as the final word on potential privacy violations by a given website. Rather, they should be treated as an initial automated inspection that requires further investigation before a definitive claim can be made.

Previous Work

Blacklight is built on the foundation of various privacy census tools built over the past decade.

It runs JavaScript instrumentation, which enables it to monitor calls to the browser’s JavaScript APIs. This approach is based on OpenWPM, an open-source tool for web privacy measurement built by Steven Englehardt, Gunes Acar, Dillon Reisman, and Arvind Narayanan at Princeton University. It is now maintained by Mozilla.

OpenWPM was used to power Princeton’s Web Transparency and Accountability Project, which monitored websites and services to discover companies’ data collection, data use, and deceptive practices.

Through numerous studies conducted between 2015 and 2019, Princeton researchers uncovered the presence of many privacy-infringing technologies, including browser fingerprinting, cookie syncing, and session replay scripts that collect passwords and sensitive user data. One notable example is the exfiltration of prescription and health-condition data from walgreens.com.

Four of the nine tests Blacklight runs are based on the techniques described in the Princeton research mentioned above: canvas fingerprinting, key logging, session recording, and third-party cookies.

OpenWPM incorporates code and techniques from other privacy inspection tools, including FourthParty, Privacy Badger, and FP Detective:

- FourthParty was an open-source platform for measuring dynamic web content that was released in August 2011 and maintained until 2014. It has been used in various studies, including one that describes how websites like Home Depot were leaking their customers' usernames to third parties. Blacklight uses FourthParty’s method to monitor what user information is being sent over the network to third parties.

- Privacy Badger is a browser add-on made by the Electronic Frontier Foundation and released in May 2014. It prevents advertisers and third-party trackers from following people on the internet.

- FP Detective was the first comprehensive study to measure the prevalence of device fingerprinting on the internet. The tool was released in 2013 and was used to conduct large-scale web-privacy studies.

Blacklight’s data analysis was inspired in part by the Website Evidence Collector developed by the European Data Protection Supervisor (EDPS). The Website Evidence Collector is a Node.js package that uses the Puppeteer library to discover how a website collects a user’s personal data. Some of the categories of collected data were chosen by the EDPS.

Other projects that have influenced Blacklight’s development include the Web Privacy Census, conducted at UC Berkeley in 2012, and the Wall Street Journal’s “What They Know” series.

How We Analyzed Each Type of Tracking

Third-Party Cookies

A third-party cookie is a small piece of data that a tracking company stores in your web browser when you visit a website. This bit of text (usually a unique number or string of characters) identifies you when you visit other websites that contain tracking code from the same company. Third-party cookies are used by hundreds of companies to build dossiers about users and deliver customized ads based on their behavior.

Popular web browsers Edge, Brave, Firefox, and Safari all block third-party tracking cookies by default, and Chrome has announced that it will phase them out.

What Blacklight Tests

Blacklight monitors network requests for the “Set-Cookie” header and observes all domains that set cookies using the document.cookie JavaScript property. Blacklight identifies third-party cookies as those whose domains do not match the domain of the website being visited. We look up these third-party domains in DuckDuckGo’s Tracker Radar data to find out who owns them, how prevalent they are, and what kinds of services they provide.
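A minimal Python sketch of the Set-Cookie half of this test, using the standard library's http.cookies.SimpleCookie parser. It assumes the caller already knows the visited site's registrable domain (site_domain), and it does not cover cookies set through document.cookie, which requires in-browser instrumentation. The function names are hypothetical.

```python
from http.cookies import SimpleCookie
from typing import Iterable, Set, Tuple
from urllib.parse import urlsplit


def cookie_domains(set_cookie_value: str, request_url: str) -> Set[str]:
    """Domains on which one Set-Cookie header value sets cookies."""
    jar = SimpleCookie()
    jar.load(set_cookie_value)
    request_host = urlsplit(request_url).hostname or ""
    # A cookie without a Domain attribute belongs to the host that set it.
    return {(morsel["domain"] or request_host).lstrip(".").lower()
            for morsel in jar.values()}


def third_party_cookie_domains(responses: Iterable[Tuple[str, str]],
                               site_domain: str) -> Set[str]:
    """responses: (request URL, Set-Cookie value) pairs seen during a visit."""
    found = set()
    for request_url, set_cookie_value in responses:
        for domain in cookie_domains(set_cookie_value, request_url):
            # The site's own domain and its subdomains count as first party.
            if domain != site_domain and not domain.endswith("." + site_domain):
                found.add(domain)
    return found
```

The resulting set of domains is what would then be looked up in Tracker Radar for owner and category information.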

Key Logging

Key logging is when a first or third party monitors the text that you type into a webpage before you hit the submit button. This technique has been used for a variety of purposes, including identifying anonymous web users by matching them to postal addresses and real names.

There are other reasons for key logging, such as providing autocomplete functionality. Blacklight cannot determine the intent behind the inspected website’s use of this technique.

What Blacklight Tests

To test whether this is happening on a given website, Blacklight types predetermined text (see Appendix) into all input fields but never clicks a submit button. It then monitors network requests to see whether the entered data was sent to any servers.
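The comparison step can be sketched in Python as follows. This is an illustration under stated assumptions: TYPED_VALUES holds a few entries from the Appendix table, hashes are assumed to be sent as lowercase hex digests, and request bodies are assumed to be available as text. It checks both the raw and the URL-decoded request for the plain value and its Base64, MD5, SHA-256, and SHA-512 forms.

```python
import base64
import hashlib
from typing import List
from urllib.parse import unquote_plus

# A few of the values from the Appendix table that Blacklight types into fields.
TYPED_VALUES = [
    "blacklight-headless@themarkup.org",
    "SUPERS3CR3T_PASSWORD",
    "IdaaaaTarbell",
]


def encoded_forms(value: str) -> List[str]:
    """The plain value plus its Base64, MD5, SHA-256 and SHA-512 forms."""
    raw = value.encode("utf-8")
    return [
        value,
        base64.b64encode(raw).decode("ascii"),
        hashlib.md5(raw).hexdigest(),  # assumption: lowercase hex digests
        hashlib.sha256(raw).hexdigest(),
        hashlib.sha512(raw).hexdigest(),
    ]


def leaked_values(request_url: str, request_body: str = "") -> List[str]:
    """Typed values that appear, in any checked form, in a request's URL or body."""
    text = request_url + "\n" + request_body
    # Search both the raw text and its URL-decoded version ("%40" -> "@").
    haystacks = (text, unquote_plus(text))
    return [value for value in TYPED_VALUES
            if any(form in h for form in encoded_forms(value) for h in haystacks)]
```

Keeping the raw text alongside the decoded text matters because URL decoding turns "+" into a space, which would otherwise hide a Base64 value sent unescaped.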

Session Recording

Session recording is technology that allows a third party to monitor and record all of a user’s behavior on a webpage—including mouse movements, clicks, scrolling down the page, and anything you type into a form even if you don’t click submit.

In a 2017 study, researchers at Princeton University found that session recorders were collecting sensitive information such as passwords and credit card numbers. When the researchers contacted the companies in question, most responded quickly and fixed the underlying cause of the data leak. However, the research highlights that these aren’t simply bugs but rather insecure practices that the researchers say should be stopped entirely. Most companies that offer session recording say they use the data to provide their customers—the websites installing the technology—meaningful insights on how to improve a user’s experience on the website. One company, Inspectlet, describes its service as watching “individual visitors use your site as if you’re looking over their shoulders.” (Inspectlet did not respond to an email seeking comment.)

What Blacklight Tests

We define session recording as the loading of a specific type of script by a company that we know to be providing session recording services.

Blacklight monitors the network requests for specific URL substrings that appear only when session recording is taking place, according to a list created by researchers at Princeton University in 2017.
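In code, this test is a substring search over request URLs. The patterns in this Python sketch are hypothetical placeholders, not entries from the Princeton list:

```python
from typing import Dict, Iterable, List, Set

# Hypothetical placeholder patterns, NOT the list compiled by Princeton researchers.
SESSION_RECORDER_SUBSTRINGS: Dict[str, List[str]] = {
    "ExampleRecorder": ["recorder.example/rec.js"],
    "OtherReplay": ["replay.example.net/capture"],
}


def session_recorders(request_urls: Iterable[str]) -> Set[str]:
    """Vendors whose session-recording URL substrings appear in any request."""
    found = set()
    for url in request_urls:
        for vendor, substrings in SESSION_RECORDER_SUBSTRINGS.items():
            if any(s in url for s in substrings):
                found.add(vendor)
    return found
```

A match shows only that the recording script was loaded; as noted below, it does not show that this particular visit was recorded.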

Sometimes key logging is used as part of session recording. In those cases, Blacklight would correctly report the session recorder as both key logging and session recording because we observed both, even though both tests are identifying the same script.

Blacklight accurately detects when a website loads these scripts—but companies typically record only a sample of website visits, so not every user is being recorded on every visit.

Canvas Fingerprinting

Fingerprinting describes a group of techniques that try to identify your browser without setting a cookie. They can identify you even if you block all cookies.

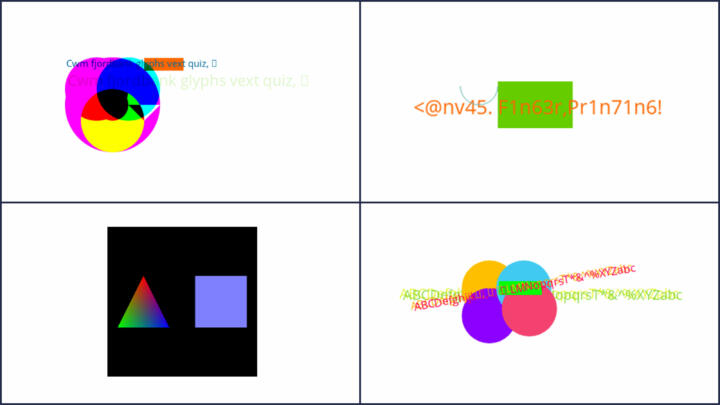

Canvas fingerprinting is a type of fingerprinting that identifies users by drawing shapes and text on a user’s webpage and noting the minor differences in the way they are rendered.

Marketers and others use these differences in font rendering, smoothing, anti-aliasing, and other features to identify individual devices. All of the major internet browsers except Chrome try to counter canvas fingerprinting, either by not fulfilling data requests from scripts known to have engaged in the practice or by trying to standardize users’ fingerprints.

The image above is an example of the type of canvas image drawn by fingerprinting scripts. These canvases are usually invisible to the user.

What Blacklight Tests

We follow the methodology described in a paper by researchers at Princeton University to identify when the HTML canvas element is used for tracking purposes. The parameters we use to identify canvases that are being drawn for fingerprinting purposes are:

- The canvas element's height and width properties must not be set below 16px.

- Text must be written to the canvas with at least 10 distinct characters.

- The script must not call the save, restore, or addEventListener methods of the rendering context.

- The script extracts an image with a call to toDataURL or with a single call to getImageData that specifies an area with a minimum size of 16px × 16px.
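The four heuristics can be expressed as a single predicate. In this Python sketch, CanvasActivity is a hypothetical summary of what one script did to one canvas; collecting that data requires browser instrumentation that is not shown. We read the getImageData rule as exactly one call covering at least 16 × 16 pixels.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

MIN_SIDE_PX = 16
MIN_DISTINCT_CHARS = 10
DISQUALIFYING_METHODS = {"save", "restore", "addEventListener"}


@dataclass
class CanvasActivity:
    """Hypothetical summary of what one script did to one canvas element."""
    width: int
    height: int
    text_written: List[str] = field(default_factory=list)    # fillText/strokeText strings
    methods_called: List[str] = field(default_factory=list)  # other method names called
    to_data_url_calls: int = 0
    get_image_data_areas: List[Tuple[int, int]] = field(default_factory=list)  # (w, h)


def looks_like_fingerprinting(c: CanvasActivity) -> bool:
    # 1. The canvas must be at least 16px x 16px.
    if c.width < MIN_SIDE_PX or c.height < MIN_SIDE_PX:
        return False
    # 2. Text with at least 10 distinct characters must be written to it.
    if len(set("".join(c.text_written))) < MIN_DISTINCT_CHARS:
        return False
    # 3. Calls to save, restore or addEventListener suggest ordinary drawing.
    if DISQUALIFYING_METHODS & set(c.methods_called):
        return False
    # 4. The image is read back via toDataURL, or one large getImageData call.
    if c.to_data_url_calls > 0:
        return True
    return (len(c.get_image_data_areas) == 1
            and min(c.get_image_data_areas[0]) >= MIN_SIDE_PX)
```

Each check can only rule a canvas out, so a canvas is flagged only when all four conditions hold.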

We have not seen this in practice, but it is possible that Blacklight could falsely label a legitimate use of the canvas that matches these heuristics. To account for this, the tool captures the image drawn by the script and renders it in the tool. Users should be able to determine the use of the canvas simply by viewing this image. An image drawn by a typical fingerprinting script is shown above.

Ad Trackers

Ad trackers are technologies that identify and collect information about users. These technologies usually (but not always) appear with some level of consent from the website owners. They are used to collect website user analytics for ad-targeting, and by data brokers and other information collectors to build user profiles. They usually take the form of JavaScript scripts or web beacons.

Web beacons are small 1px by 1px images that are placed on a website for tracking purposes by third parties. Using this technique, a third party can determine behaviors, including when a particular user went to a site, their individual IP address, and the kind of browser they used.

What Blacklight Tests

Blacklight checks all network requests against the EasyList and EasyPrivacy lists, which contain URLs and URL substrings that the EasyList project team has identified as being used for advertising or tracking. Blacklight monitors network activity for requests made to these URLs and substrings.

Blacklight only records requests made to third-party domains. It ignores any URL patterns in the EasyList or EasyPrivacy lists that match a first-party domain. For example, the Electronic Frontier Foundation, a digital rights advocacy group, hosts its own analytics, which results in requests to https://anon-stats.eff.org, their analytics subdomain. If a user types in https://eff.org, Blacklight does not consider calls to https://anon-stats.eff.org to be a third-party request.
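A simplified Python sketch of this check follows. It supports only two kinds of filter rules ("||hostname^" anchors and plain substrings) out of the much richer filter syntax that EasyList uses, and the three rules are made up for illustration. As in the EFF example, a matching request to a first-party subdomain is ignored.

```python
from typing import Iterable, List
from urllib.parse import urlsplit

# Made-up rules in EasyList syntax; the third mirrors the EFF example above.
RULES = ["||ads.example-adnetwork.com^", "/pixel/track?", "||anon-stats.eff.org^"]


def rule_matches(rule: str, url: str) -> bool:
    """Match a tiny subset of Adblock Plus filter syntax against a URL."""
    rule = rule.split("$", 1)[0]  # drop filter options such as $third-party
    if rule.startswith("||") and rule.endswith("^"):
        # "||host^" matches that hostname and any of its subdomains.
        pattern = rule[2:-1]
        host = urlsplit(url).hostname or ""
        return host == pattern or host.endswith("." + pattern)
    return rule in url  # everything else: treat as a plain substring


def ad_tracker_requests(request_urls: Iterable[str], site_domain: str) -> List[str]:
    """Third-party requests that match at least one rule."""
    hits = []
    for url in request_urls:
        host = urlsplit(url).hostname or ""
        if host == site_domain or host.endswith("." + site_domain):
            continue  # first-party requests are ignored even when a rule matches
        if any(rule_matches(rule, url) for rule in RULES):
            hits.append(url)
    return hits
```

A production matcher would use a full filter-list engine rather than this subset.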

We look up these third-party domains in DuckDuckGo’s Tracker Radar dataset to find out who owns them, how prevalent they are, and what kinds of services they provide. We further filter the list to only include third-party domains that belong to the “Ad Motivated Tracking” categories defined in the Tracker Radar dataset.
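The Tracker Radar lookup and category filter might look like this in Python. The TRACKER_RADAR dictionary is a hypothetical, heavily simplified stand-in for the dataset's per-domain records, with made-up domains and owners:

```python
from typing import Iterable, List, Tuple

AD_CATEGORY = "Ad Motivated Tracking"

# Hypothetical, heavily simplified stand-in for Tracker Radar's per-domain data.
TRACKER_RADAR = {
    "adnetwork.example": {"owner": "Example Ads Inc.",
                          "categories": ["Ad Motivated Tracking", "Advertising"]},
    "fonts.example": {"owner": "Example Fonts Co.", "categories": ["CDN"]},
}


def ad_motivated_trackers(third_party_domains: Iterable[str]) -> List[Tuple[str, str]]:
    """(domain, owner) pairs for third parties in the ad-motivated category."""
    report = []
    for domain in sorted(set(third_party_domains)):
        entry = TRACKER_RADAR.get(domain)
        # Domains missing from the dataset are not counted as ad trackers.
        if entry is not None and AD_CATEGORY in entry["categories"]:
            report.append((domain, entry["owner"]))
    return report
```

The length of the returned list corresponds to the count of advertising-related trackers reported for the site.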

Facebook Pixel

The Facebook pixel is a piece of code Facebook created that allows other websites to target their visitors later with ads on Facebook. Common actions that can be tracked by the pixel include viewing a page or specific content, adding payment information, or making a purchase.

What Blacklight Tests

Blacklight looks for network requests from the site going to Facebook and looks in the URL query parameters for data that matches the schema of what is described in the documentation for Facebook’s pixel. We look for three different types of data: “standard events,” “custom events” and “advanced matching.”
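A minimal Python sketch of the classification step. Assumptions: the event name is read from the “ev” query parameter, STANDARD_EVENTS is a subset of the standard events listed in Meta's documentation, and advanced-matching fields are assumed to appear as “ud[...]” query parameters. Anything that is not a standard event is labeled custom.

```python
from typing import Optional
from urllib.parse import parse_qs, urlsplit

# A subset of the standard event names listed in Meta's pixel documentation.
STANDARD_EVENTS = {"PageView", "ViewContent", "Search", "AddToCart",
                   "AddPaymentInfo", "InitiateCheckout", "Lead", "Purchase"}


def classify_pixel_request(url: str) -> Optional[dict]:
    """Summarize a Facebook pixel request, or return None if it is not one."""
    parts = urlsplit(url)
    host = parts.hostname or ""
    if host != "facebook.com" and not host.endswith(".facebook.com"):
        return None
    params = parse_qs(parts.query)
    event = params.get("ev", [None])[0]
    if event is None:
        return None
    return {
        "event": event,
        "kind": "standard" if event in STANDARD_EVENTS else "custom",
        # Assumption: advanced-matching fields arrive as "ud[...]" parameters.
        "advanced_matching": sorted(k for k in params if k.startswith("ud[")),
    }
```

parse_qs decodes percent-encoded keys, so "ud%5Bem%5D" is reported as "ud[em]".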

Google Analytics’ “Remarketing Audiences”

Google Analytics is the most popular website analytics platform in use today. According to whotracks.me, 41.7 percent of web traffic is analyzed by Google Analytics. While most of this service’s functionality is meant to give developers and website owners information on how their audience engages with their website, the tool also allows the website to make custom audience lists based on user behavior and then target ads to those visitors across the internet using Google Ads and Display & Video 360. Blacklight examines inspected sites for the presence of the tool, not how it is used.

What Blacklight Tests

Blacklight looks for network requests from the inspected site going to a URL beginning with “stats.g.doubleclick” that also contains the “UA-”, “G-”, or “AW-” Google account identifier prefix. This is described in more detail in Google Analytics developer documentation.
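This rule reduces to a hostname prefix check plus a pattern search for the account identifier. The regular expression in this Python sketch is our own approximation of the identifier format, not Google's specification:

```python
import re
from urllib.parse import urlsplit

# Our approximation of a Google account ID with a UA-, G- or AW- prefix.
ACCOUNT_ID = re.compile(r"\b(?:UA|G|AW)-[A-Z0-9-]+")


def uses_remarketing(url: str) -> bool:
    """True for a stats.g.doubleclick request that carries a Google account ID."""
    host = urlsplit(url).hostname or ""
    return host.startswith("stats.g.doubleclick") and ACCOUNT_ID.search(url) is not None
```

Both conditions must hold: an ordinary Google Analytics hit to another host, or a doubleclick request without an account ID, does not count.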

Pixels from Meta, TikTok, and X

A pixel is a piece of code created by a company that allows it to track user activity on other websites, such as purchases, items in a shopping cart, or the webpages you visit. This information can be used to change the user experience, including the advertisements served on their web pages or apps.

What Blacklight Tests

Blacklight looks for network requests from sites going to Meta (the parent company of Facebook and Instagram), TikTok, and X (formerly known as Twitter) that are not ‘static’ API requests.

- Meta: We monitor network requests to facebook URLs that include specific information in the “ev” query field. We then determine whether relevant requests are “standard” or “custom” events, and if they implement “advanced matching.” We also look in the URL query parameters for data that matches the schema described in Meta’s documentation.

- X: We monitor network requests to twitter URLs that are not “static” API requests. (Although the company changed its name from Twitter to X in July 2023, many of its URLs, including the API URL associated with pixel tracking, still carry the name “twitter.”) We record information from the request’s “event” object, as well as the event description. This is described in more detail in the X Conversion Tracking documentation.

- TikTok: We monitor network requests to tiktok URLs that include an “event” object in the request body. The tool then reports a number of request parameters, including the contents of the “body” object and any query variables. This is described in more detail in the TikTok pixel documentation.
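The X and TikTok rules can be sketched in Python as below. The example URLs used to exercise it are assumptions for illustration, and the sketch assumes the TikTok request body is JSON with a top-level “event” key; the real payload formats are defined in each company's documentation and may differ.

```python
import json
from typing import Optional
from urllib.parse import parse_qs, urlsplit


def x_pixel_params(url: str) -> Optional[dict]:
    """Query parameters of a request to a twitter URL that is not a 'static' request."""
    parts = urlsplit(url)
    if "twitter" not in (parts.hostname or "") or "static" in url:
        return None
    return {key: values[0] for key, values in parse_qs(parts.query).items()}


def tiktok_pixel_event(url: str, body: str) -> Optional[dict]:
    """Event details from a tiktok request whose JSON body has an 'event' key."""
    parts = urlsplit(url)
    if "tiktok" not in (parts.hostname or ""):
        return None
    try:
        payload = json.loads(body)
    except (TypeError, ValueError):  # no body, or a body that is not JSON
        return None
    if not isinstance(payload, dict) or "event" not in payload:
        return None
    return {"event": payload["event"], "body": payload, "query": parse_qs(parts.query)}
```

The X check deliberately inspects only the hostname for "twitter", because the company's pixel endpoints still use that name.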

2020 Investigation and Survey

To determine the prevalence of tracking technologies on the internet, both for context in Blacklight and for accompanying news stories, we ran the 100,000 most popular websites, as defined by the Tranco List, through Blacklight. We performed this survey in 2020 when we initially launched Blacklight. The data and analysis code can be found on GitHub. Blacklight successfully captured data for 81,617 of those URLs. The rest either failed to resolve, timed out on multiple attempts, or didn’t load a webpage. The percentages listed below are for the 81,617 successful captures.

Some of this analysis goes beyond what appears in the tool. The key findings from our survey are as follows:

- 6 percent of websites used canvas fingerprinting.

- 15 percent of websites loaded scripts from known session recorders.

- 4 percent of websites logged keystrokes.

- 13 percent of sites did not load any third-party cookies or tracking network requests.

- The median number of third-party cookie loads was three.

- The median number of ad trackers loaded was seven.

- 74 percent of sites loaded Google tracking technology.

- 33 percent of websites loaded Facebook tracking technology.

- 50 percent of sites used Google Analytics’ remarketing feature.

- 30 percent of sites used the Facebook pixel.
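Survey figures like these are shares and medians computed over the successful captures, so the denominator is 81,617 rather than the 100,000 sites attempted. A minimal Python sketch, assuming a hypothetical per-site record schema:

```python
from statistics import median
from typing import List


def summarize(captures: List[dict]) -> dict:
    """Survey-style figures over successful captures (hypothetical record schema)."""
    n = len(captures)

    def percent(key: str) -> int:
        return round(100 * sum(1 for c in captures if c[key]) / n)

    return {
        "canvas_fingerprinting_pct": percent("canvas_fingerprinting"),
        "session_recording_pct": percent("session_recording"),
        "key_logging_pct": percent("key_logging"),
        "median_third_party_cookies": median(c["third_party_cookies"] for c in captures),
        "median_ad_trackers": median(c["ad_trackers"] for c in captures),
    }
```

Medians are used for the counts because a few sites with very large numbers of cookies or trackers would pull an average upward.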

We classified as Google tracking technology any network requests being made to any of the following domains:

- google-analytics.com

- doubleclick.net

- googletagmanager.com

- googletagservices

- googlesyndication.com

- googleadservices

- 2mdn.net

We classified as Facebook tracking technology any network requests being made to any of the following Facebook domains:

- facebook.com

- facebook.net

- atdmt.com
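The two domain lists above can be applied as suffix matches on each request's hostname. In this Python sketch, we assume the entries written without a suffix refer to googletagservices.com and googleadservices.com:

```python
from typing import Dict, Iterable
from urllib.parse import urlsplit

# Assumption: the suffix-less entries mean googletagservices.com and googleadservices.com.
GOOGLE_DOMAINS = ["google-analytics.com", "doubleclick.net", "googletagmanager.com",
                  "googletagservices.com", "googlesyndication.com",
                  "googleadservices.com", "2mdn.net"]
FACEBOOK_DOMAINS = ["facebook.com", "facebook.net", "atdmt.com"]


def on_domain_list(url: str, domains: Iterable[str]) -> bool:
    """True when the URL's host is a listed domain or one of its subdomains."""
    host = urlsplit(url).hostname or ""
    return any(host == d or host.endswith("." + d) for d in domains)


def tracking_flags(request_urls: Iterable[str]) -> Dict[str, bool]:
    """Whether a site made any request to Google or Facebook tracking domains."""
    urls = list(request_urls)
    return {
        "google": any(on_domain_list(u, GOOGLE_DOMAINS) for u in urls),
        "facebook": any(on_domain_list(u, FACEBOOK_DOMAINS) for u in urls),
    }
```

Matching on "." plus the domain avoids counting lookalike hosts such as notfacebook.com.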

Limitations

Blacklight’s analysis is limited by four main factors:

- It is a simulation of user behavior, not actual user behavior, and could thus trigger different surveillance responses.

- The inspected website could be surveilling user activities for benign purposes.

- False positives (possible with canvas fingerprinting): Very occasionally, legitimate uses of the HTML canvas match the heuristics Blacklight uses to identify canvas fingerprinting.

- False negatives: The stack tracing technique used by Blacklight’s JavaScript instrumentation might incorrectly attribute a call to a monitored Window API method to a library included by a script. For example, if a fingerprinting script uses jQuery to make some calls, jQuery might end up on the top of the stack and Blacklight will attribute the call to jQuery instead of the script that's actually responsible. This possibility was brought to our attention by researchers who reviewed our methodology; we have not seen it occur in our tests or our survey of the 100,000 most popular sites.

Regarding false positives, when Blacklight visits a site, that site can see the request is coming from computers hosted by Amazon’s AWS cloud infrastructure. Because botnets are often run on cloud infrastructure, our tool could trigger bot-detection software on the website, including canvas fingerprinting. This could result in false positives for the canvas fingerprinting test where the purpose of the test is not to track users but rather to detect botnets.

To test this, we took a random sample of 1,000 sites from the top websites on the Tranco List that we had already run through Blacklight on AWS. We ran this sample through Blacklight on a local computer with a residential IP address in New York City. We concluded that the results of running Blacklight locally are very similar, but not identical, to those of running it on cloud infrastructure.

Results for Sample: Local Computer and AWS

| Measure | Local | AWS |
|---|---|---|
| Canvas fingerprinting | 8% | 10% |
| Session recording | 18% | 19% |
| Key logging | 4% | 6% |
| Median number of third-party cookies | 4 | 5 |
| Median number of third-party trackers recorded | 7 | 8 |

Not all surveillance activity that is imperceptible to the user is necessarily malicious. For instance, canvas fingerprinting is used for fraud prevention because it can identify a device. And key logging can be used to provide autocomplete functionality.

Blacklight does not attempt to identify the intent of any particular tracking technology it finds.

Nor can Blacklight determine exactly how a website uses the data it collects on a user when loading session recording scripts and monitoring user behavior, such as mouse movements and keystrokes.

Blacklight does not check the terms of use or privacy policies of the websites it visits to see whether they disclose their surveillance activities.

Appendix

Input field values

The table below lists the values we programmed Blacklight to type into input fields on websites. We used Mozilla’s documentation of the HTML autocomplete attribute as our reference. Blacklight also checks for the Base64, MD5, SHA-256, and SHA-512 versions of these values.

| Autocomplete Attribute | Blacklight Value |
|---|---|
| Date | 01/01/2026 |
| Email | blacklight-headless@themarkup.org |
| Password | SUPERS3CR3T_PASSWORD |
| Search | TheMarkup |
| Text | IdaaaaTarbell |
| URL | https://themarkup.org |
| Organization | The Markup |
| Organization Title | Non-profit newsroom |
| Current Password | S3CR3T_CURRENT_PASSWORD |
| New Password | S3CR3T_NEW_PASSWORD |
| Username | idaaaa_tarbell |
| Family Name | Tarbell |
| Given Name | Idaaaa |
| Name | IdaaaaTarbell |
| Street Address | PO Box #1103 |
| Address Line 1 | PO Box #1103 |
| Postal Code | 10159 |
| CC-Name | IDAAAATARBELL |
| CC-Given-Name | IDAAAA |
| CC-Family-Name | TARBELL |
| CC-Number | 4479846060020724 |
| CC-Exp | 01/2026 |
| CC-Type | Visa |
| Transaction Amount | 13371337 |

Updates

February 6, 2026: We updated Blacklight to detect X and TikTok pixels.

July 14, 2025: We updated Blacklight so that it continues to detect Google Analytics tracking accurately.

March 21, 2023: The Ad Trackers section was updated to reflect the addition of EasyList to the lists that third-party requests are checked against.

Acknowledgements

We thank Gunes Acar (KU Leuven), Steven Englehardt (Mozilla), and Arvind Narayanan and Jonathan Mayer (Princeton, CITP) for comments and suggestions on an earlier draft.