A timer started the moment he logged in at his desk. He’d scan a post of a product flagged by someone as counterfeit, search for facts, and decide if the item should be pulled from Amazon’s marketplace. He said he gave the entire investigation about three minutes—and then the timer reset.

“You can smell it on the floor when you get there—the tension and the stress of everyone on this metric system and on this clock,” said the former Amazon investigator on the company’s marketplace abuse team. He left in 2018 and asked to not be named, fearing speaking publicly would hinder his employment in the industry.

He and another former Amazon employee, Rachel Johnson Greer, who left the company in 2017, said the company required them to crank through about 20 review tasks an hour.

“You go faster than 19 an hour so you have breaks,” said Greer, who reviewed customer orders for potential fraud after joining Amazon in 2007. Fall short of your quota, Greer said, and “you get put on a performance improvement plan … and you get managed out.”

The barrage of suspicious cases these former employees rapidly reviewed is indicative of a larger problem: Bad actors are finding their way onto the platform, and it’s not clear Amazon has a workable strategy to put an end to the abuse.

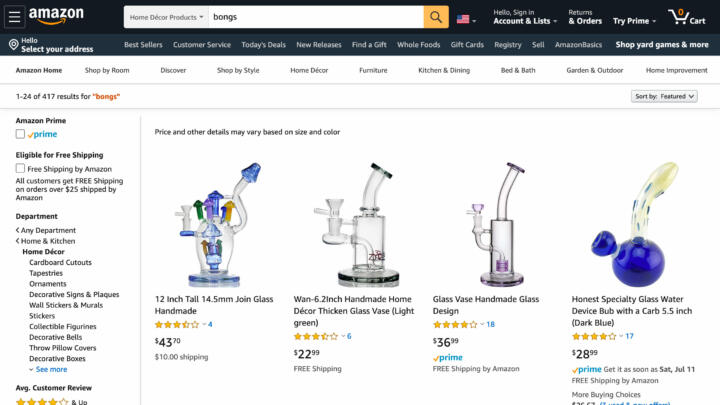

Last month, an investigation by The Markup found that Amazon failed to stop posts of products banned by its own rules. We filled a shopping cart with “marijuana bongs, ‘dab kits’ used to inhale cannabis concentrates, ‘crackers’ that can be used to get high on nitrous oxide, and compounds that reviews showed were used as injectable drugs.” We provided Amazon with links to each one of our findings, and most were removed.

Amazon is the largest retailer on the planet and an attractive target for fraud and fake review schemes, as well as for third-party sellers reportedly hawking dangerous baby car seats, expired food, or fake Apple chargers labeled as the real thing. Its bookstore, the largest in the world, and its self-publishing business have also come under fire for distributing misinformation and white nationalist content.

YouTube, Facebook, and Twitter are publicly reckoning with calls to improve content moderation and have pledged to expand their armies of review professionals and be more transparent about how much they remove and why. Amazon, in contrast, has remained relatively silent and opaque.

“Social platforms have spent the last four years thinking about how to handle misinformation,” said Renée DiResta, a technical research manager at the Stanford Internet Observatory, “where it doesn’t seem Amazon has.”

Facebook now has about 30,000 content moderators, and Twitter, a smaller company, doubled its content moderation workforce to 1,500 in recent years, according to The Washington Post. In 2017, Susan Wojcicki, the CEO of YouTube, announced in a blog post that Google, in response to public outcry about offensive and violent content on YouTube, would expand its workforce responsible for reviewing content to more than 10,000 people.

In a written statement to The Markup, Amazon spokesperson Patrick Graham declined to say specifically how many people review content or whether Amazon has increased that workforce in recent years.

Graham said that in 2019, Amazon invested more than $500 million and more than 8,000 employees in “protecting our store from fraud and abuse.” That number could include executives, programmers, attorneys, or other employees. In response to a follow-up question, Graham declined to specify how many employees specifically moderate content.

When asked about the hourly quota, Graham said the company prioritizes accuracy and measures productivity along with “a variety of dimensions to evaluate an employee’s overall job performance.”

Graham also declined to comment on whether the company supported the Santa Clara Principles, a set of voluntary content moderation practices that include publishing the number of post removals and account suspensions, notifying and providing explanations to impacted users, and implementing an appeal system.

Last year, the Electronic Frontier Foundation reported that Facebook, LinkedIn, Medium, Snap, Tumblr, and YouTube all support the initiative.

In addition, Facebook, Twitter, and YouTube all issue transparency reports, though, as the advocacy group New America noted in its assessment of those reports last year, the companies failed to give a full picture of takedown figures.

Amazon provides even less data to the public than its peers. In a report to Congress earlier this year, the company stated it blocked more than six billion “suspected bad listings from being published.” But it declined to provide information to The Markup on the number of posts flagged, the number of accounts suspended, which rules were violated, or how they were flagged—the minimum information the Santa Clara Principles recommend be made public.

What we do know is that Amazon, like other major platforms, relies in part on automated tools to police its pages. The company says its tools are capable of reviewing hundreds of millions of products in a matter of minutes.

Still, The Markup’s reporting shows Amazon’s system is hardly perfect.

Some sellers appeared to try to evade Amazon’s automated tools with simple tricks: They avoided or misspelled words or incorrectly categorized the listing. In one instance, we found marijuana bongs listed as “vases” in home decor. Many were still up at the time of this writing.

In an email to The Markup at the time, Amazon spokesperson Patrick Graham said the company has “proactive measures in place to prevent suspicious or prohibited products from being listed,” and that sellers are responsible for following the rules and choosing the correct category.

“If products that are against our policies are found on our site, we immediately remove the listing, take action on the bad actor, and further improve our systems,” Graham said.

Nevertheless, experts say, Amazon’s focus on growth makes abuse hard to stamp out entirely.

“Their very business model is flawed … allowing anyone with very little vetting to sell pretty much anything—that’s the fundamental problem,” said Natasha Tusikov, an assistant professor at York University in Toronto and author of “Chokepoints: Global Private Regulation of the Internet.”

“It’s impossible to weed out all the people who are trying to get around government regulations or defraud people, because you require so very little of them when they sign up,” she said.

Graham disagreed with this claim, stating, “Amazon’s seller verification processes are designed to make it easy for honest sellers to set up an account quickly while thwarting attempts by fraudsters.” He added that the company collects data on new sellers and seeks to validate it, in part through machines.

Concerns about how well Amazon oversees its content extend to the company’s massive bookstore and publishing arm.

Earlier this year, ProPublica and The Atlantic found that about 200 book recommendations curated by and for white nationalists in a reading room were self-published through Amazon.

“Amazon, which in the United States controls around half of the market for all books, and close to 90% for e-books, has become a gateway for white supremacists to reach the American reading public,” the investigation stated.

The article reported that several titles mentioned were removed from Kindle Direct Publishing.

“As a bookseller, we believe that providing access to the written word is important,” Graham said in an email to The Markup, repeating the company’s response in the article. “We invest significant time and resources to ensure our guidelines are followed, and remove products that do not adhere to our guidelines.”

Search for childhood vaccines in Amazon’s bookstore, and anti-vaccination titles are still among the top results.

DiResta, of Stanford, found Amazon’s algorithm listed anti-vaccination literature as a “#1 Best Seller” in categories such as Emergency Pediatrics and History of Medicine. It wasn’t until the 12th place on the list that a book countering the anti-vaccination point of view appeared, she said.

“Curation algorithms are largely amoral,” DiResta wrote in a 2019 piece for Wired about her findings. “They’re engineered to show us things we are statistically likely to want to see, content that people similar to us have found engaging—even if it’s stuff that’s factually unreliable or potentially harmful.”

Adelin Cai, formerly of Pinterest and Twitter, said both human review and machine detection are critical in making platforms their best selves. Automated tools, she pointed out, can scan potentially harmful images “without subjecting [the staff] to a lot of the exposure to bad content.”

But Cai doesn’t think automation will replace human judgment entirely, even as the industry matures and AI advances.

“Nothing supersedes the ability of the human mind to be nimble and understand context,” Cai said.

Just last month, Cai and Clara Tsao, a former Mozilla fellow and chief technology officer of a U.S. government interagency anti-violent-extremism task force, launched the first membership-based professional organization for the field of trust and safety, the Trust and Safety Professional Association. (They also launched a corresponding foundation devoted to education, case studies and research.)

Google, Airbnb, Slack, and Facebook are just a few of the group’s star-studded list of inaugural funders.

Amazon isn’t among them.