Hello, friends,

Earlier this month, Facebook quietly removed the ability of advertisers to target users by race.

It was a huge reversal for Facebook, which has been defending its racial ad categories—which it calls “multicultural affinity”—against charges of illegal discrimination for the past several years.

But there was no fanfare for the announcement. The company published the news on a page promoting “Facebook for Business” rather than its main Facebook newsroom page. The company described the change briefly in a press release touting its “latest efforts to simplify and streamline our targeting options.”

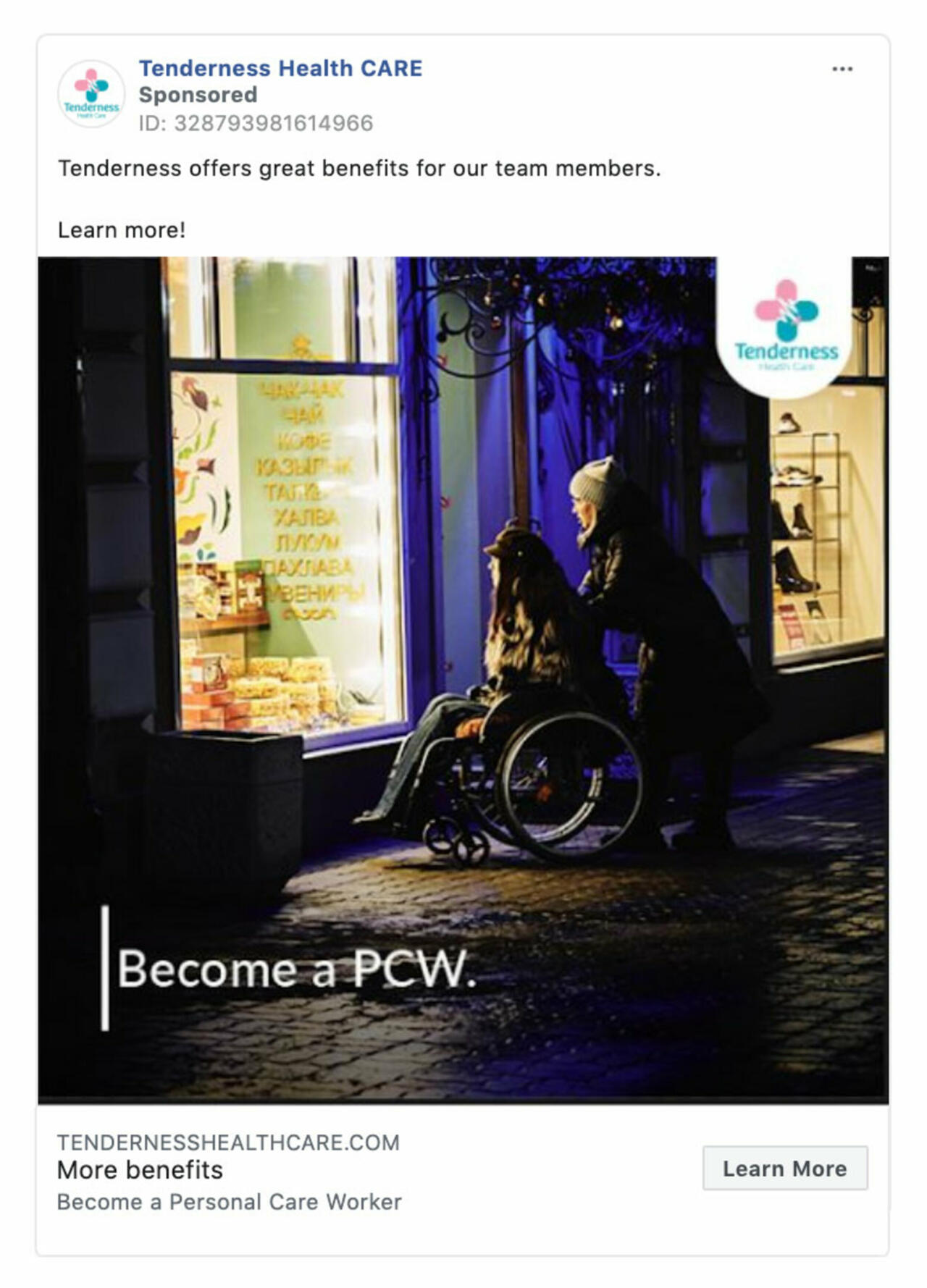

Facebook’s move came a week after The Markup contacted Facebook about a discriminatory job advertisement that appeared on its platform. Investigative data reporter Jeremy Merrill found the ad, a job posting for health care workers that targeted users who were younger than 55 and whom Facebook had identified as having “African American multicultural affinity.”

Federal law prohibits employers from discriminating on the basis of age and race, including in advertising open jobs. Facebook took down the ad—placed by Wisconsin health care agency Tenderness Health Care—after we brought it to Facebook’s attention.

Unfortunately, this was not the first or even the second or third time that Facebook has been found allowing racial profiling in job, housing, or financial services advertisements on its platforms—all categories in which federal law prohibits discrimination.

For me, tracking Facebook’s discriminatory ads has been a bit of a personal journey. In 2015, when I was working at ProPublica, investigative data journalist Surya Mattu (who is now at The Markup) came to me with an interesting tidbit: He had figured out how to collect all the advertising categories that Facebook put him in.

Playing around with the data, Surya and another colleague, Terry Parris Jr., noticed that they were both assigned to the category “African American ethnic affinity”—even though neither of them is Black. We wondered how many others were assigned to incorrect categories—so Surya built a tool that let people see how Facebook had categorized them.

After we released the tool in 2016, civil rights attorney Rachel Goodman called me and described how Facebook’s racial categories were more problematic than I had envisioned. They could be used to violate federal antidiscrimination laws, she said. So Terry and I decided to test it out: We bought a housing ad on Facebook targeted to be shown only to White people and, shockingly, Facebook approved it.

The Fair Housing Act prohibits discrimination in housing ads. But Facebook told us it considered compliance with the law to be the responsibility of advertisers placing ads on the platform.

After the story was published, however, Facebook said it would disable the use of “ethnic affinity” ad targeting for housing, employment, and credit ads.

A year later, I wondered if Facebook had fixed the problem. So my ProPublica colleagues Ariana Tobin, Maddy Varner (who is now at The Markup), and I bought some more discriminatory housing ads—ones that excluded people Facebook categorized as African Americans, mothers, Spanish speakers, etc. And—oops!—the ads were approved.

“This was a failure in our enforcement and we’re disappointed that we fell short of our commitments,” Ami Vora, vice president of product management at Facebook at the time, said then. She promised the company would do better.

Then last year Facebook settled a bevy of lawsuits alleging that it had violated federal antidiscrimination laws and pledged that it would no longer allow advertisers to exclude people of certain genders, ages, and “multicultural affinities” (a renamed version of Facebook’s “ethnic affinities”) from seeing housing, job, and financial services ads.

But the settlement still allowed other types of advertising to target people based on “multicultural affinity.” And Jeremy’s reporting seems to indicate that some ads—like the employment one he found—could still slip through Facebook’s system.

And so, perhaps in an admission that it couldn’t effectively prevent the category’s discriminatory use, Facebook finally removed the multicultural affinity category. Facebook employee Kian Lavi tweeted that he had fought for three years to get the category removed.

“This is a small step in ensuring an equitable internet, free of potential discrimination,” Lavi wrote.

I’m proud that my current and former colleagues have also played a part in removing discriminatory advertising from the internet.

In a world where news articles are often consigned to the dustbin of history hours after they are written, we think that staying on a story and being persistent is one of the most important moves we can make.

As always, thanks for reading.

Best,

Julia Angwin

Editor-in-Chief

The Markup

P.S. Want to learn more about the U.S. antitrust investigations into big tech? Tune in next Thursday at 12 noon ET, when I will be interviewing Barry Lynn, Executive Director of The Open Markets Institute. You can sign up for a reminder and send questions for the Q&A portion to hello@themarkup.org.