When Kurt Wilson, a computer science student at the University of Central Florida, heard that his university was using a controversial online proctoring tool called Honorlock, he immediately wanted to learn more.

The company, whose business has boomed during the pandemic, promises to prevent cheating on remote exams with AI-powered software, used by students, that “monitors each student’s exam session and alerts a live, US-based test proctor if it detects any potential problems.” The software can scan students’ faces to verify their identity, track specific phrases that their computer microphone captures, and even promises to search for and remove test questions that leak online.

One feature from Honorlock especially piqued Wilson’s interest. The company, according to its materials, provides a way to track cheating students through what Honorlock calls “seed sites” or others call “honeypots”—fake websites that remotely tattle on students who visit them during exams.

Wilson pored over a patent for the software to learn more, finding example sites listed. By looking for common code and the same test questions over the past year, Wilson eventually turned up about a dozen honeypots apparently linked to Honorlock, five of which are still operating.

“It kind of became an obsession at one point,” said Wilson, who hasn’t tracked the honeypots in some months but was at one point checking for them daily.

The sites Wilson found are bare bones. They have names like “gradepack.com” and “quizlookup.com.” They’re largely a catalog of thousands of apparent test questions that are sometimes bizarrely specific. “In which part of the digestive system does chemical digestion begin?” one post asks. A multiple-choice question requests using “VSEPR theory to predict the molecular geometry around the carbon atom in formaldehyde, H2CO.”

Click the “show answer” button below any of the questions and you won’t get an answer, just a digital chiming noise. Meanwhile, detailed information about visitors’ mouse movements and even their typing is transmitted to an Honorlock server.

In the patent, which student media at Arizona State University recently flagged along with an Honorlock honeypot site, the company explains that its sites can track visitor information like IP addresses as evidence that a student was looking up answers on a secondary device.

When the pandemic led to shuttered schools, demand for services like Honorlock skyrocketed as educators worried about whether students would be able to easily find answers online using devices that instructors didn’t know about. In its online materials for its software, Honorlock says, “[S]tudents have access to more and more electronic devices and it’s becoming harder for instructors to preserve academic integrity.”

But some experts in the ethics of education worry techniques like honeypot websites simply go too far.

“I can sum up this activity in one word,” said Sarah Eaton, an associate professor at the University of Calgary who studies academic integrity. “Entrapment.”

Capturing Students’ Data

While several companies offer services that tap into students’ webcams to track them, setting up fake sites to catch potential cheaters appears to be an innovation—one that crosses an ethical line for some experts.

Before, students searching online for answers may simply have turned up nothing, while now, a potentially incriminating website will be there to tempt them.

Ceceilia Parnther, an associate professor at St. John’s University who has studied remote proctoring, said the situation is ironic: Students “are being set up” through honeypots, she said, in an attempt to detect academic integrity violations, a practice that’s itself ethically questionable.

“The face-to-face comparison is a teacher walking around with the answer key and putting it on the corner of each desk and then penalizing students if they look over at it,” she said.

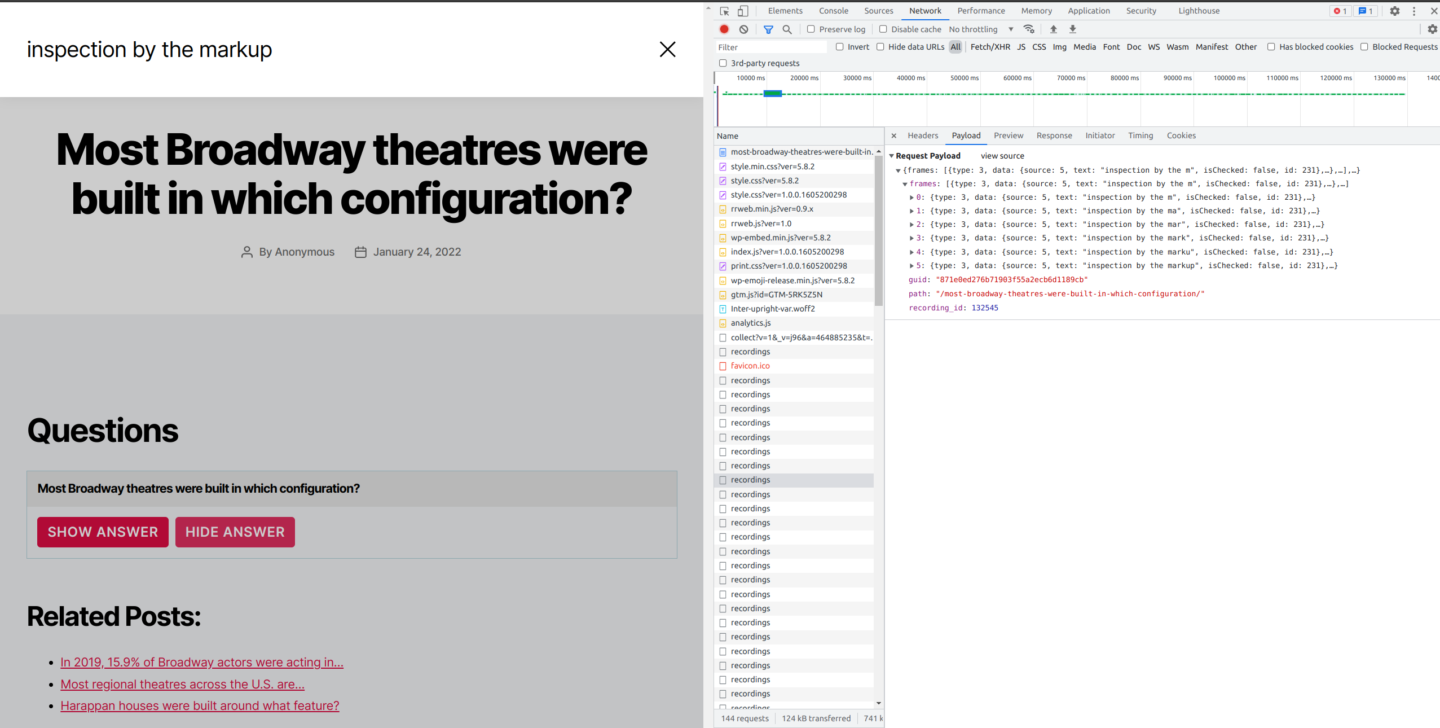

Honorlock is not directly mentioned on the sites Wilson found. But the sites list the same test questions as each other and include terms of service pages that mention the email address “privacy@hlprivacy.com.” The network activity of the pages, reviewed by The Markup, also shows data being sent to an Honorlock.com server.

To understand precisely what data the honeypot sites might be tracking, The Markup inspected each page’s source code and network activity, using Firefox’s developer tools, for each of the still-operating websites noted by Wilson. We found that the sites captured data on a visitor’s type of device, where that visitor’s mouse was on the page, what they entered into a search bar, and details on what they clicked.
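For readers who want to try a similar check themselves, here is a minimal sketch of one way to summarize a page's network activity. It is not the script The Markup used. It assumes the network log was exported from the browser's developer tools as an HAR (HTTP Archive) file, and it simply counts requests to hosts other than the page's own. The function names, the sample log, and the hostnames in it are hypothetical and made up for illustration.

```python
from collections import Counter
from urllib.parse import urlsplit


def count_request_hosts(har: dict) -> Counter:
    """Count requests per hostname in a parsed HAR (HTTP Archive) log."""
    hosts = Counter()
    for entry in har.get("log", {}).get("entries", []):
        url = entry.get("request", {}).get("url", "")
        host = urlsplit(url).hostname  # None when the URL has no host
        if host:
            hosts[host] += 1
    return hosts


def third_party_hosts(har: dict, page_host: str) -> dict:
    """Return request counts for hosts other than the page's own host
    and its subdomains."""
    counts = count_request_hosts(har)
    return {
        host: n
        for host, n in counts.items()
        if host != page_host and not host.endswith("." + page_host)
    }


# Hypothetical, hand-written sample standing in for an exported HAR file.
# None of these hostnames are real; they are assumptions for the example.
SAMPLE_HAR = {
    "log": {
        "entries": [
            {"request": {"method": "GET", "url": "https://example-quiz.test/question/1"}},
            {"request": {"method": "GET", "url": "https://static.example-quiz.test/app.js"}},
            {"request": {"method": "POST", "url": "https://collector.example.net/events"}},
            {"request": {"method": "POST", "url": "https://collector.example.net/events"}},
        ]
    }
}

if __name__ == "__main__":
    print(third_party_hosts(SAMPLE_HAR, "example-quiz.test"))
```

With a real export, the sample dictionary would be replaced by the result of `json.load()` on the saved file. A host count like this only shows where requests go. Seeing what is actually sent still means reading the individual request bodies, as in the manual review described above.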

Honorlock didn’t respond to a request for comment on the sites or to answer other questions about its privacy practices.

It’s not clear how many students have been caught by the company’s honeypots. Honorlock touts partnerships with colleges like the University of Florida and University of Maryland, among several others. The company announced last year that, fueled by pandemic-era demand, it had raised $25 million in venture funding, building on a previous round of $11.5 million. Universities around the United States have now signed lucrative contracts with Honorlock, paying hundreds of thousands of dollars per year for the service.

Some educators, meanwhile, are using similar tactics on their own, spreading false or traceable test answers online to catch students who look up answers.

In one case, reported on by student media at Princeton, a professor acknowledged that a mathematics TA there had attempted to catch students cheating by adding a “unique marker” to a wrong solution posted online. The solution included “a reference to a Theorem” that was irrelevant to the answer. The Princetonian reported that several students were accused of cheating based on their response to the question.

Michael Hotchkiss, a spokesperson for Princeton, declined to comment.

Rethinking Exams

Apart from honeypots, proctoring software’s rapid ascent has also given rise to other privacy and ethics concerns. Some students and educators argue the software leads to an anxiety-inducing testing environment, and others have raised technical concerns, pointing to face-detection software that fails to recognize the faces of darker-skinned students.

The fear of an all-seeing proctor can have brutal side effects. One woman went into labor while taking a remote bar exam but continued with the test, afraid of being flagged for cheating.

For educators, the draw of software that promises to automatically track down cheaters is clear. But some experts argue that cheating isn’t a problem that can or should be fixed by advances in technology to surveil students, but by rethinking how those students are tested in the first place.

Pedagogy ethicists like Parnther say this kind of software is backfiring by creating an environment where students are, by default, under suspicion. That mindset itself facilitates cheating, she says, by subtly suggesting to students that they might as well cheat because teachers expect them to anyway. “Students see that there’s an environment where it’s automatically assumed that they are not to be trusted,” Parnther said.

Eaton proposes that educators should consider a more radical rethinking of testing, one that doesn’t rely on surveilling students. Punishing students for using their devices fundamentally goes against how learning works in the age of the internet, Eaton says, and the cat-and-mouse game of sussing out possible cheaters isn’t working. Moving to a better system might mean shifting to more oral or open-book exams, for example, which still demonstrate proficiency without the specter of simply Googling answers.

There will always be some level of cheating on exams, Parnther argues, but cracking down on students is now coming at the expense of their education.

“While we can’t ensure that no students will ever cheat, we can make it a norm that most students are just trying to do their best work,” she said.

Additional reporting by Surya Mattu.